Generative AI technology is improving rapidly, and it’s now possible to generate text and images based on text input. Stable Diffusion is a text-to-image model that empowers you to create photorealistic applications. You can easily generate images from text using Stable Diffusion models through Amazon SageMaker JumpStart.

The following are examples of input texts and the corresponding output images generated by Stable Diffusion. The inputs are “A boxer dancing on a table,” “A lady on the beach in swimming wear, water color style,” and “A dog in a suit.”

Although generative AI solutions are powerful and useful, they can also be vulnerable to manipulation and abuse. Customers using them for image generation must prioritize content moderation, implementing strong moderation practices that create a safe and positive user experience while safeguarding their platform and brand reputation.

In this post, we explore using the AWS AI services Amazon Rekognition and Amazon Comprehend, along with other techniques, to effectively moderate Stable Diffusion model-generated content in near-real time. To learn how to launch and generate images from text using a Stable Diffusion model on AWS, refer to Generate images from text with the stable diffusion model on Amazon SageMaker JumpStart.

Solution overview

Amazon Rekognition and Amazon Comprehend are managed AI services that provide pre-trained and customizable machine learning (ML) models via an API, eliminating the need for ML expertise. Amazon Rekognition Content Moderation automates and streamlines image and video moderation. Amazon Comprehend uses ML to analyze text and uncover valuable insights and relationships.

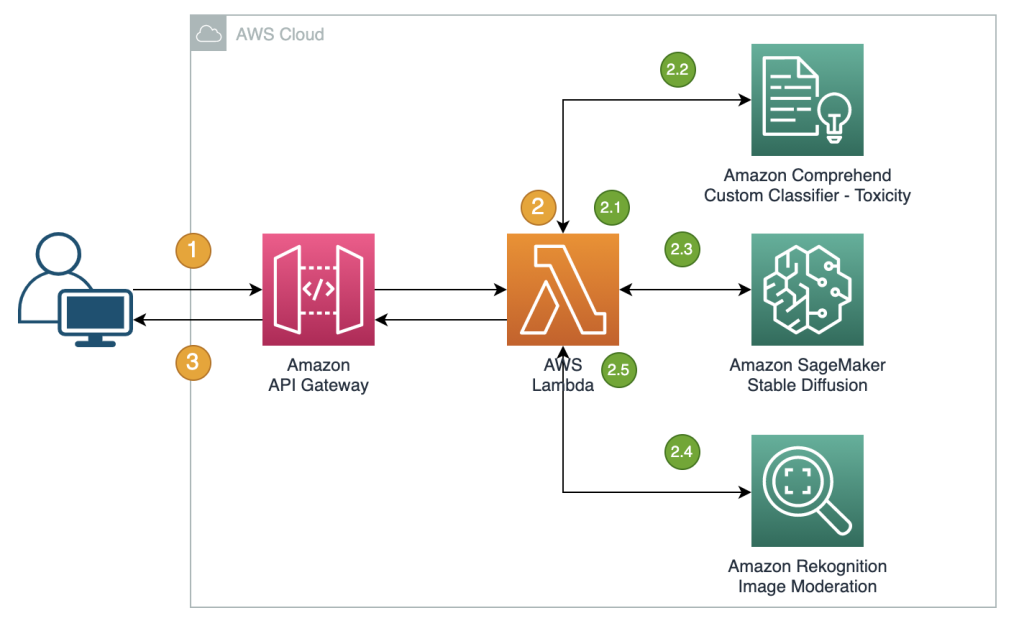

The following reference illustrates the creation of a RESTful proxy API for moderating Stable Diffusion text-to-image model-generated images in near-real time. In this solution, we launched and deployed a Stable Diffusion model (v2-1 base) using JumpStart. The solution uses negative prompts and text moderation solutions such as Amazon Comprehend and a rule-based filter to moderate input prompts. It also uses Amazon Rekognition to moderate the generated images. The RESTful API returns the generated image, along with moderation warnings if unsafe information is detected, to the client.

The steps in the workflow are as follows:

- The user sends a prompt to generate an image.

- An AWS Lambda function coordinates image generation and moderation using Amazon Comprehend, JumpStart, and Amazon Rekognition:

  - Apply a rule-based condition to input prompts in the Lambda function, enforcing content moderation with forbidden word detection.

  - Use the Amazon Comprehend custom classifier to analyze the prompt text for toxicity classification.

  - Send the prompt to the Stable Diffusion model through the SageMaker endpoint, passing both the prompts from user input and the negative prompts from a predefined list.

  - Send the image bytes returned from the SageMaker endpoint to the Amazon Rekognition DetectModerationLabels API for image moderation.

  - Construct a response message that includes the image bytes and warnings if the previous steps detected any inappropriate information in the prompt or generated image.

- Send the response back to the client.
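The workflow steps above can be sketched as a small coordinating function. This is a minimal illustration, not the code from the sample solution: the function name `moderate_and_generate` and its three callable parameters are hypothetical stand-ins for the rule-based and Amazon Comprehend prompt checks, the SageMaker endpoint call, and the Amazon Rekognition image check.

```python
from typing import Callable, Dict, List


def moderate_and_generate(
    prompt: str,
    check_prompt: Callable[[str], List[str]],
    generate_image: Callable[[str], bytes],
    check_image: Callable[[bytes], List[str]],
) -> Dict[str, object]:
    """Run prompt moderation, image generation, and image moderation in order.

    All three callables are hypothetical hooks supplied by the caller:
    - check_prompt returns a list of warnings for the input prompt
    - generate_image returns the generated image bytes
    - check_image returns a list of warnings for the generated image
    """
    # Text moderation happens before the model is invoked.
    warnings = list(check_prompt(prompt))
    # Image generation through the model endpoint.
    image_bytes = generate_image(prompt)
    # Image moderation happens after the model returns.
    warnings += check_image(image_bytes)
    # The response carries both the image and any warnings.
    return {"image": image_bytes, "warnings": warnings}
```

Passing the three steps in as callables keeps the coordination logic separate from the AWS calls, so each step can be replaced or tested on its own.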

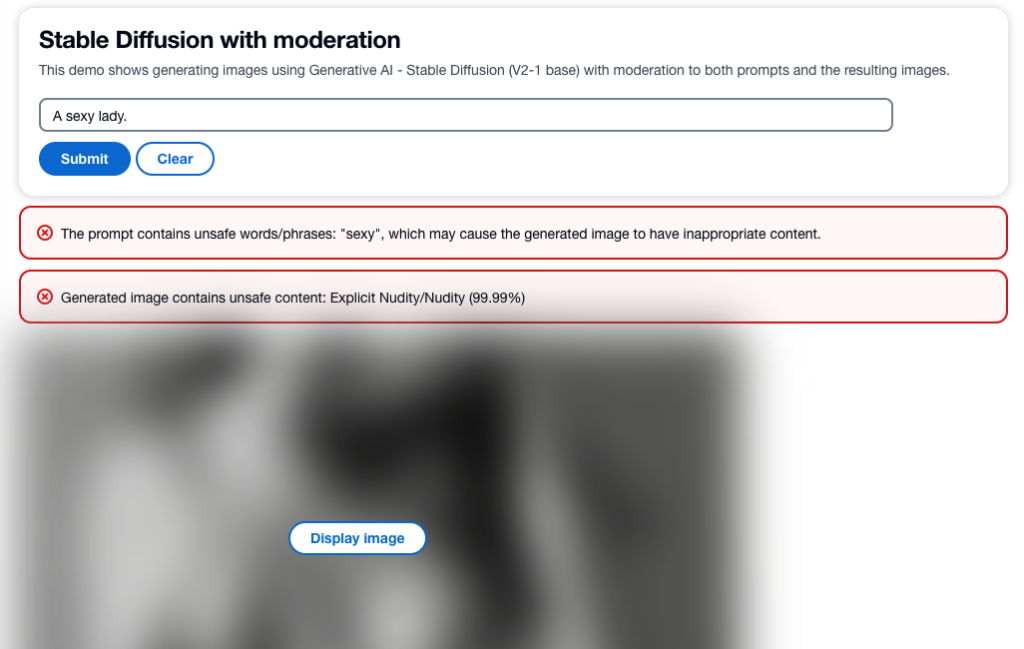

The following screenshot shows a sample app built using the described architecture. The web UI sends user input prompts to the RESTful proxy API and displays the image and any moderation warnings received in the response. The demo app blurs the actual generated image if it contains unsafe content. We tested the app with the sample prompt “A sexy lady.”

You can implement more sophisticated logic for a better user experience, such as rejecting the request if the prompts contain unsafe information. Additionally, you could have a retry policy to regenerate the image if the prompt is safe but the output is unsafe.
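One possible shape for the reject-and-retry logic is sketched below. The function name, the callable parameters, and the default of three attempts are all assumptions made for illustration; the sample app does not necessarily work this way.

```python
def generate_with_retry(prompt, prompt_is_unsafe, generate_image, image_is_unsafe,
                        max_attempts=3):
    """Reject unsafe prompts; otherwise regenerate until an image passes moderation.

    prompt_is_unsafe, generate_image, and image_is_unsafe are hypothetical
    callables supplied by the caller. Returns the image bytes, or None when the
    prompt is rejected or every attempt produced an unsafe image.
    """
    # Reject the request up front if the prompt itself is unsafe.
    if prompt_is_unsafe(prompt):
        return None
    # The prompt is safe, so an unsafe output may be down to model randomness.
    for _ in range(max_attempts):
        image = generate_image(prompt)
        if not image_is_unsafe(image):
            return image
    return None
```

Capping the number of attempts matters here, because each retry adds a full image generation to the response time.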

Predefine a list of negative prompts

Stable Diffusion supports negative prompts, which let you specify prompts to avoid during image generation. Creating a predefined list of negative prompts is a practical and proactive way to prevent the model from producing unsafe images. By including prompts like “naked,” “sexy,” and “nudity,” which are known to lead to inappropriate or offensive images, the model can recognize and avoid them, reducing the risk of generating unsafe content.

The implementation can be managed in the Lambda function when calling the SageMaker endpoint to run inference of the Stable Diffusion model, passing both the prompts from user input and the negative prompts from a predefined list.
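A minimal sketch of that call follows. It assumes the endpoint accepts a JSON body with `prompt` and `negative_prompt` keys, which is how JumpStart Stable Diffusion endpoints are commonly invoked; verify the payload format against your deployed model. The helper names and the endpoint name are hypothetical, and the response body is returned unparsed because its format depends on the model and the requested content type.

```python
import json

# Negative prompts taken from the examples in this post; extend as needed.
NEGATIVE_PROMPTS = ["naked", "sexy", "nudity"]


def build_payload(prompt, negative_prompts=NEGATIVE_PROMPTS):
    """Combine the user prompt with the predefined negative prompts.

    The "prompt" / "negative_prompt" keys are an assumption about the
    endpoint's input schema.
    """
    return json.dumps({
        "prompt": prompt,
        "negative_prompt": ", ".join(negative_prompts),
    })


def generate_image(runtime_client, endpoint_name, prompt):
    """Invoke the SageMaker endpoint and return the raw response body.

    runtime_client is expected to be boto3.client("sagemaker-runtime").
    """
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(prompt),
    )
    return response["Body"].read()
```

Because the negative prompts are merged inside the Lambda function, the list can be updated centrally without any change on the client side.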

Although this approach is effective, it could impact the results generated by the Stable Diffusion model and limit its functionality. It’s important to consider it as one of the moderation techniques, combined with other approaches such as text and image moderation using Amazon Comprehend and Amazon Rekognition.

Moderate input prompts

A common approach to text moderation is to use a rule-based keyword lookup method to identify whether the input text contains any forbidden words or phrases from a predefined list. This method is relatively easy to implement, with minimal performance impact and lower costs. However, the major drawback of this approach is that it detects only the words included in the predefined list and can’t detect new or modified variations of forbidden words. Users can also attempt to bypass the rules by using alternative spellings or special characters to replace letters.
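A rule-based keyword lookup can be as small as the following sketch. The word list and function name are hypothetical placeholders; the second usage line demonstrates the limitation described above, where a modified spelling slips past the filter.

```python
import re

# Hypothetical forbidden-word list; a real deployment would maintain its own.
FORBIDDEN_WORDS = {"naked", "nudity"}


def find_forbidden_words(prompt, forbidden=FORBIDDEN_WORDS):
    """Return the sorted forbidden words that appear as whole words in the prompt."""
    # Lowercase the prompt and split it into alphanumeric tokens.
    tokens = set(re.findall(r"[a-z0-9']+", prompt.lower()))
    return sorted(tokens & set(forbidden))


print(find_forbidden_words("A NAKED statue"))   # ['naked']
print(find_forbidden_words("A n4ked statue"))   # [] because the altered spelling is not in the list
```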

To address the limitations of rule-based text moderation, many solutions have adopted a hybrid approach that combines rule-based keyword lookup with ML-based toxicity detection. The combination of both approaches allows for a more comprehensive and effective text moderation solution, capable of detecting a wider range of inappropriate content and improving the accuracy of moderation outcomes.

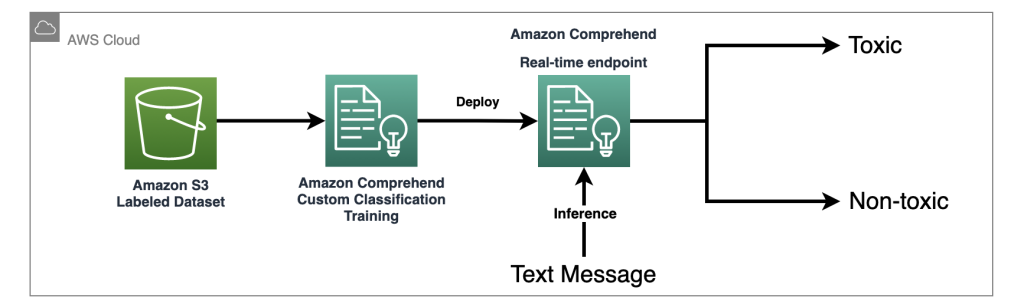

In this solution, we use an Amazon Comprehend custom classifier to train a toxicity detection model, which we use to detect potentially harmful content in input prompts in cases where no explicit forbidden words are detected. With the power of machine learning, we can teach the model to recognize patterns in text that may indicate toxicity, even when such patterns aren’t easily detectable by a rule-based approach.

With Amazon Comprehend as a managed AI service, training and inference are simplified. You can easily train and deploy Amazon Comprehend custom classification with just two steps. Check out our workshop lab for more information about the toxicity detection model using an Amazon Comprehend custom classifier. The lab provides a step-by-step guide to creating and integrating a custom toxicity classifier into your application. The following diagram illustrates this solution architecture.

This sample classifier uses a social media training dataset and performs binary classification. However, if you have more specific requirements for your text moderation needs, consider using a more tailored dataset to train your Amazon Comprehend custom classifier.
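Calling a deployed custom classifier endpoint from Python might look like the sketch below. It uses the Boto3 Amazon Comprehend `classify_document` call with an endpoint ARN. The label name `toxic` and the 0.5 threshold are assumptions: the actual class names come from the labels in your training dataset.

```python
def is_toxic(comprehend_client, endpoint_arn, prompt,
             toxic_label="toxic", threshold=0.5):
    """Return True when the custom classifier scores the prompt as toxic.

    comprehend_client is expected to be boto3.client("comprehend").
    toxic_label and threshold are hypothetical values that depend on how the
    classifier was trained.
    """
    response = comprehend_client.classify_document(
        Text=prompt,
        EndpointArn=endpoint_arn,
    )
    # Each entry in "Classes" carries a label name and a confidence score.
    for cls in response.get("Classes", []):
        if cls["Name"] == toxic_label and cls["Score"] >= threshold:
            return True
    return False
```

In the hybrid approach, this check would run only after the rule-based lookup finds no forbidden words, which avoids an API call for prompts that are already rejected.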

Moderate output images

Although moderating input text prompts is important, it doesn’t guarantee that all images generated by the Stable Diffusion model will be safe for the intended audience, because the model’s outputs can contain a certain level of randomness. Therefore, it’s equally important to moderate the images generated by the Stable Diffusion model.

In this solution, we use Amazon Rekognition Content Moderation, which employs pre-trained ML models to detect inappropriate content in images and videos. Specifically, we call the Amazon Rekognition DetectModerationLabels API to moderate images generated by the Stable Diffusion model in near-real time. Amazon Rekognition Content Moderation provides pre-trained APIs to analyze a wide range of inappropriate or offensive content, such as violence, nudity, hate symbols, and more. For a comprehensive list of Amazon Rekognition Content Moderation taxonomies, refer to Moderating content.

The following code demonstrates how to call the Amazon Rekognition DetectModerationLabels API to moderate images within a Lambda function using the Python Boto3 library. This function takes the image bytes returned from SageMaker and sends them to the Image Moderation API for moderation.
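The original listing is not reproduced here, so what follows is a minimal sketch of such a function rather than the authors’ code. It relies on the Boto3 `detect_moderation_labels` call; the helper names and the 50% default confidence threshold are assumptions.

```python
def make_rekognition_client():
    """Create the Rekognition client (imported lazily so the helper below
    can be exercised without AWS credentials)."""
    import boto3
    return boto3.client("rekognition")


def moderate_image(rekognition_client, image_bytes, min_confidence=50.0):
    """Send image bytes to Amazon Rekognition and return the moderation labels.

    An empty list means no label met the confidence threshold.
    """
    response = rekognition_client.detect_moderation_labels(
        Image={"Bytes": image_bytes},
        MinConfidence=min_confidence,
    )
    # Keep only the fields needed to build a warning message.
    return [
        {
            "name": label["Name"],
            "parent": label.get("ParentName", ""),
            "confidence": label["Confidence"],
        }
        for label in response.get("ModerationLabels", [])
    ]
```

Inside a Lambda handler, the image bytes returned from the SageMaker endpoint would be passed straight to `moderate_image`, and any returned labels would be added to the response as warnings.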

For more examples of the Amazon Rekognition Image Moderation API, refer to our Content Moderation Image Lab.

Effective image moderation techniques for fine-tuning models

Fine-tuning is a common technique used to adapt pre-trained models to specific tasks. In the case of Stable Diffusion, fine-tuning can be used to generate images that incorporate specific objects, styles, and characters. Content moderation is critical when training a Stable Diffusion model to prevent the creation of inappropriate or offensive images. This involves carefully reviewing and filtering out any data that could lead to the generation of such images. By doing so, the model learns from a more diverse and representative range of data points, improving its accuracy and preventing the propagation of harmful content.

JumpStart makes fine-tuning the Stable Diffusion model easy by providing the transfer learning scripts using the DreamBooth method. You just need to prepare your training data, define the hyperparameters, and start the training job. For more details, refer to Fine-tune text-to-image Stable Diffusion models with Amazon SageMaker JumpStart.

The dataset for fine-tuning needs to be a single Amazon Simple Storage Service (Amazon S3) directory including your images and the instance configuration file dataset_info.json, as shown in the following code. The JSON file associates the images with the instance prompt like this: {'instance_prompt':<<instance_prompt>>}.
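As a small illustration, the configuration file can be written next to the training images before the directory is uploaded to Amazon S3. The function name and the local directory argument are hypothetical; only the file name `dataset_info.json` and the `instance_prompt` key come from the description above.

```python
import json
from pathlib import Path


def write_dataset_info(dataset_dir, instance_prompt):
    """Write dataset_info.json into the directory that holds the training images."""
    path = Path(dataset_dir) / "dataset_info.json"
    # The file holds a single key that maps to the instance prompt.
    path.write_text(json.dumps({"instance_prompt": instance_prompt}))
    return path
```

Note that `json.dumps` emits double-quoted keys, which is what a JSON parser expects even though the inline example above is written with single quotes.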

You can manually review and filter the images, but this can be time-consuming and even impractical when you do it at scale across many projects and teams. In such cases, you can automate a batch process to centrally check all the images against the Amazon Rekognition DetectModerationLabels API and automatically flag or remove images so that they don’t contaminate your training.
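A batch check over a training dataset might be sketched as follows. It passes each image to `detect_moderation_labels` by S3 reference instead of downloading the bytes. The function name, the suffix filter, and the confidence threshold are assumptions; the object keys could come from an S3 `list_objects_v2` paginator.

```python
# Amazon Rekognition image operations accept JPEG and PNG files.
IMAGE_SUFFIXES = (".png", ".jpg", ".jpeg")


def flag_unsafe_images(rekognition_client, bucket, keys, min_confidence=50.0):
    """Return {key: [label names]} for every image that triggers moderation labels.

    rekognition_client is expected to be boto3.client("rekognition");
    keys is any iterable of S3 object keys in the given bucket.
    """
    flagged = {}
    for key in keys:
        # Skip non-image objects such as dataset_info.json.
        if not key.lower().endswith(IMAGE_SUFFIXES):
            continue
        response = rekognition_client.detect_moderation_labels(
            Image={"S3Object": {"Bucket": bucket, "Name": key}},
            MinConfidence=min_confidence,
        )
        labels = [label["Name"] for label in response.get("ModerationLabels", [])]
        if labels:
            flagged[key] = labels
    return flagged
```

The returned mapping can then drive whatever policy fits your process, such as moving flagged objects to a quarantine prefix or deleting them before the training job starts.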

Moderation latency and cost

In this solution, a sequential pattern is used to moderate text and images. A rule-based function and Amazon Comprehend are called for text moderation, and Amazon Rekognition is used for image moderation, both before and after invoking Stable Diffusion. Although this approach effectively moderates input prompts and output images, it may increase the overall cost and latency of the solution, which is something to consider.

Latency

Both Amazon Rekognition and Amazon Comprehend offer managed APIs that are highly available and have built-in scalability. Despite potential latency variations due to input size and network speed, the APIs used in this solution from both services offer near-real-time inference. Amazon Comprehend custom classifier endpoints can respond in less than 200 milliseconds for input text sizes of less than 100 characters, while the Amazon Rekognition Image Moderation API responds in approximately 500 milliseconds for average file sizes of less than 1 MB. (The results are based on the test conducted using the sample application, which qualifies as a near-real-time requirement.)

In total, the moderation API calls to Amazon Rekognition and Amazon Comprehend add up to 700 milliseconds to the API call. It’s important to note that the Stable Diffusion request usually takes longer, depending on the complexity of the prompts and the underlying infrastructure capability. In the test account, using an instance type of ml.p3.2xlarge, the average response time for the Stable Diffusion model via a SageMaker endpoint was around 15 seconds. Therefore, the latency introduced by moderation is approximately 5% of the overall response time, which has a minimal impact on the overall performance of the system.
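The arithmetic behind the 5% estimate can be checked with a few lines, using the upper-bound figures quoted above. The sketch assumes the 15-second figure covers image generation only, so moderation is added on top; measured against generation time alone, the ratio is 700 / 15,000, or about 4.7%. Both round to roughly 5%.

```python
def moderation_share(moderation_ms, generation_ms):
    """Fraction of the total response time that is spent on moderation."""
    return moderation_ms / (moderation_ms + generation_ms)


comprehend_ms = 200      # upper bound for the custom classifier endpoint
rekognition_ms = 500     # approximate image moderation latency
generation_ms = 15_000   # average Stable Diffusion response on ml.p3.2xlarge

share = moderation_share(comprehend_ms + rekognition_ms, generation_ms)
print(f"{share:.1%}")    # 4.5%
```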

Cost

The Amazon Rekognition Image Moderation API employs a pay-as-you-go model based on the number of requests. The cost varies depending on the AWS Region used and follows a tiered pricing structure. As the volume of requests increases, the cost per request decreases. For more information, refer to Amazon Rekognition pricing.

In this solution, we used an Amazon Comprehend custom classifier and deployed it as an Amazon Comprehend endpoint to facilitate real-time inference. This implementation incurs both a one-time training cost and ongoing inference costs. For detailed information, refer to Amazon Comprehend Pricing.

JumpStart enables you to quickly launch and deploy the Stable Diffusion model as a single package. Running inference on the Stable Diffusion model incurs costs for the underlying Amazon Elastic Compute Cloud (Amazon EC2) instance as well as inbound and outbound data transfer. For detailed information, refer to Amazon SageMaker Pricing.

Summary

In this post, we provided an overview of a sample solution that showcases how to moderate Stable Diffusion input prompts and output images using Amazon Comprehend and Amazon Rekognition. Additionally, you can define negative prompts in Stable Diffusion to prevent generating unsafe content. By implementing multiple moderation layers, the risk of producing unsafe content can be greatly reduced, ensuring a safer and more dependable user experience.

Learn more about content moderation on AWS and our content moderation ML use cases, and take the first step towards streamlining your content moderation operations with AWS.

About the Authors

Lana Zhang is a Senior Solutions Architect at AWS WWSO AI Services team, specializing in AI and ML for content moderation, computer vision, and natural language processing. With her expertise, she is dedicated to promoting AWS AI/ML solutions and assisting customers in transforming their business solutions across diverse industries, including social media, gaming, e-commerce, and advertising & marketing.

James Wu is a Senior AI/ML Specialist Solution Architect at AWS, helping customers design and build AI/ML solutions. James’s work covers a wide range of ML use cases, with a primary interest in computer vision, deep learning, and scaling ML across the enterprise. Prior to joining AWS, James was an architect, developer, and technology leader for over 10 years, including 6 years in engineering and 4 years in marketing and advertising industries.

Kevin Carlson is a Principal AI/ML Specialist with a focus on Computer Vision at AWS, where he leads Business Development and GTM for Amazon Rekognition. Prior to joining AWS, he led Digital Transformation globally at Fortune 500 Engineering company AECOM, with a focus on artificial intelligence and machine learning for generative design and infrastructure assessment. He is based in Chicago, where outside of work he enjoys time with his family, and is passionate about flying airplanes and coaching youth baseball.

John Rouse is a Senior AI/ML Specialist at AWS, where he leads global business development for AI services focused on Content Moderation and Compliance use cases. Prior to joining AWS, he held senior-level business development and leadership roles with cutting-edge technology companies. John is working to put machine learning in the hands of every developer with the AWS AI/ML stack. Small ideas bring about small impact. John’s goal for customers is to empower them with big ideas and opportunities that open doors so they can make a major impact with their customers.
