Vision-language foundational models are built on the premise of a single pre-training followed by subsequent adaptation to multiple downstream tasks. Two main and disjoint training scenarios are popular: CLIP-style contrastive learning and next-token prediction. Contrastive learning trains the model to predict whether image-text pairs correctly match, effectively building visual and text representations for the corresponding image and text inputs, whereas next-token prediction predicts the most likely next text token in a sequence, thus learning to generate text according to the required task. Contrastive learning enables image-text and text-image retrieval tasks, such as finding the image that best matches a certain description, and next-token learning enables text-generative tasks, such as Image Captioning and Visual Question Answering (VQA). While both approaches have demonstrated powerful results, a model that is pre-trained contrastively typically does not fare well on text-generative tasks, and vice versa. Furthermore, adaptation to other tasks is often done with complex or inefficient methods. For example, in order to extend a vision-language model to videos, some models need to do inference for each video frame individually. This limits the size of the videos that can be processed to only a few frames and does not fully take advantage of motion information available across frames.

Motivated by this, we present “A Simple Architecture for Joint Learning for MultiModal Tasks”, called MaMMUT, which is able to train jointly for these competing objectives and which provides a foundation for many vision-language tasks either directly or via simple adaptation. MaMMUT is a compact, 2B-parameter multimodal model that trains across contrastive, text-generative, and localization-aware objectives. It consists of a single image encoder and a text decoder, which allows for a direct reuse of both components. Furthermore, a straightforward adaptation to video-text tasks requires using the image encoder only once and can handle many more frames than prior work. In line with recent language models (e.g., PaLM, GLaM, GPT3), our architecture uses a decoder-only text model and can be thought of as a simple extension of language models. While modest in size, our model outperforms the state of the art or achieves competitive performance on image-text and text-image retrieval, video question answering (VideoQA), video captioning, open-vocabulary detection, and VQA.

Decoder-only model architecture

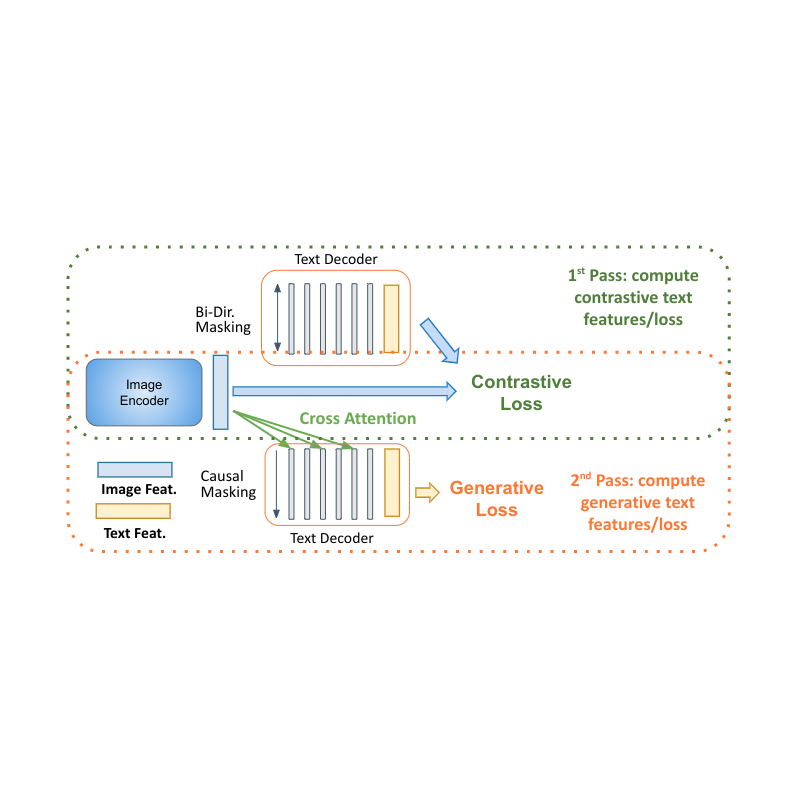

One surprising finding is that a single language decoder is sufficient for all these tasks, which obviates the need for both the complex constructs and the training procedures presented before. For example, our model (shown on the left in the architecture figure) consists of a single visual encoder and a single text decoder, connected via cross-attention, and trains simultaneously on both contrastive and text-generative types of losses. Comparatively, prior work is either not able to handle image-text retrieval tasks, or applies only some losses to only some parts of the model. To enable multimodal tasks and fully take advantage of the decoder-only model, we need to jointly train both contrastive losses and text-generative captioning-like losses.
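
As a rough illustration of what training on both loss types can look like, the sketch below combines a symmetric contrastive (InfoNCE-style) loss over paired image and text embeddings with a next-token cross-entropy loss over caption logits. It is a minimal sketch under stated assumptions, not the MaMMUT implementation: the function names, the temperature of 0.07, and the loss weights `w_con` and `w_cap` are all hypothetical choices made for this example.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def l2_normalize(v):
    norm = math.sqrt(dot(v, v)) or 1.0  # guard against zero vectors
    return [a / norm for a in v]

def log_softmax(row):
    m = max(row)
    lse = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - lse for x in row]

def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric InfoNCE: the i-th image should match the i-th text.

    The temperature value is an assumption for this sketch.
    """
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    n = len(imgs)
    # sims[i][j]: scaled cosine similarity between image i and text j.
    sims = [[dot(i, t) / temperature for t in txts] for i in imgs]
    image_to_text = -sum(log_softmax(sims[k])[k] for k in range(n)) / n
    sims_t = [list(col) for col in zip(*sims)]
    text_to_image = -sum(log_softmax(sims_t[k])[k] for k in range(n)) / n
    return 0.5 * (image_to_text + text_to_image)

def captioning_loss(token_logits, target_ids):
    """Mean next-token cross-entropy over the positions of one caption."""
    nll = [-log_softmax(logits)[t] for logits, t in zip(token_logits, target_ids)]
    return sum(nll) / len(nll)

def joint_loss(image_embs, text_embs, token_logits, target_ids,
               w_con=1.0, w_cap=1.0):
    """Weighted sum of both objectives (the weights are hypothetical)."""
    return (w_con * contrastive_loss(image_embs, text_embs)
            + w_cap * captioning_loss(token_logits, target_ids))
```

With correctly paired embeddings the contrastive term is close to zero, while swapping the text embeddings makes it large; the captioning term depends only on the decoder's token logits.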

MaMMUT architecture (left) is a simple construct consisting of a single vision encoder and a single text decoder. Compared to other popular vision-language models, e.g., PaLI (middle) and ALBEF, CoCa (right), it trains jointly and efficiently for multiple vision-language tasks, with both contrastive and text-generative losses, fully sharing the weights between the tasks.

Decoder two-pass learning

Decoder-only models for language learning show clear advantages in performance with smaller model size (almost half the parameters). The main challenge in applying them to multimodal settings is to unify contrastive learning (which uses an unconditional sequence-level representation) with captioning (which optimizes the likelihood of a token conditioned on the previous tokens). We propose a two-pass approach to jointly learn these two conflicting types of text representations within the decoder. During the first pass, we use cross-attention and causal masking to learn the caption generation task: the text features can attend to the image features and predict the tokens in sequence. On the second pass, we disable the cross-attention and causal masking to learn the contrastive task. The text features do not see the image features but can attend bidirectionally to all text tokens at once to produce the final text-based representation. Completing this two-pass approach within the same decoder accommodates both types of tasks, which were previously hard to reconcile. While simple, this model architecture is able to provide a foundation for multiple multimodal tasks.
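
The two passes described above can be sketched with a toy, weight-free attention layer in which causal masking and cross-attention are simple switches. This is only an illustration under simplifying assumptions: it uses a single head, identity projections instead of learned weights, one layer, and mean pooling for the sequence-level text embedding, none of which are taken from the MaMMUT implementation. All function names are hypothetical.

```python
import math

def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attend(queries, keys, values, mask=None):
    """Scaled dot-product attention; mask[i][j] == False hides key j from query i."""
    d = len(queries[0])
    out = []
    for i, q in enumerate(queries):
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        if mask is not None:
            scores = [s if mask[i][j] else -1e9 for j, s in enumerate(scores)]
        w = softmax(scores)
        out.append([sum(w[j] * values[j][c] for j in range(len(values)))
                    for c in range(len(values[0]))])
    return out

def causal_mask(n):
    # Position i may only attend to positions j <= i.
    return [[j <= i for j in range(n)] for i in range(n)]

def add(xs, ys):
    return [[a + b for a, b in zip(x, y)] for x, y in zip(xs, ys)]

def decoder_layer(text, image, causal, cross_attention):
    """One toy decoder layer whose behavior is switched per pass."""
    mask = causal_mask(len(text)) if causal else None
    h = add(text, attend(text, text, text, mask))   # text self-attention
    if cross_attention:
        h = add(h, attend(h, image, image))         # text attends to image features
    return h

def two_pass(text, image):
    # Pass 1 (generative): causal masking on, cross-attention on.
    gen_features = decoder_layer(text, image, causal=True, cross_attention=True)
    # Pass 2 (contrastive): both off, so text attends bidirectionally
    # and never sees the image features.
    con_features = decoder_layer(text, image, causal=False, cross_attention=False)
    n = len(con_features)
    # Mean pooling is an assumption of this sketch.
    text_embedding = [sum(row[c] for row in con_features) / n
                      for c in range(len(con_features[0]))]
    return gen_features, text_embedding
```

In this sketch the same layer serves both passes: the first-pass features would feed a next-token prediction head, and the second-pass embedding would be compared against an image embedding in the contrastive loss.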

MaMMUT decoder-only two-pass learning enables both contrastive and generative learning paths through the same model.

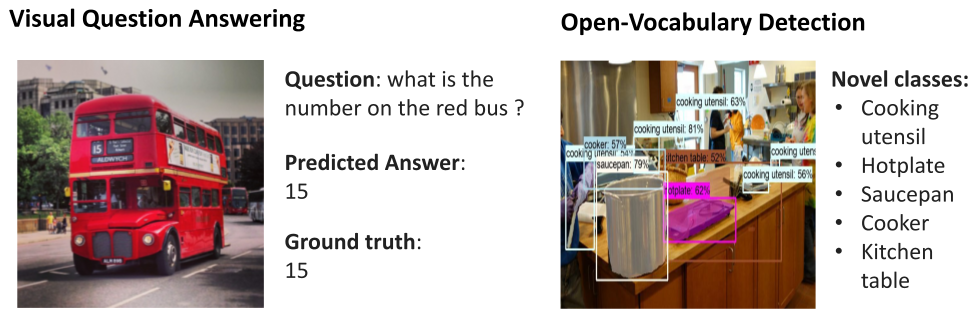

Another advantage of our architecture is that, since it is trained for these disjoint tasks, it can be seamlessly applied to multiple applications such as image-text and text-image retrieval, VQA, and captioning.

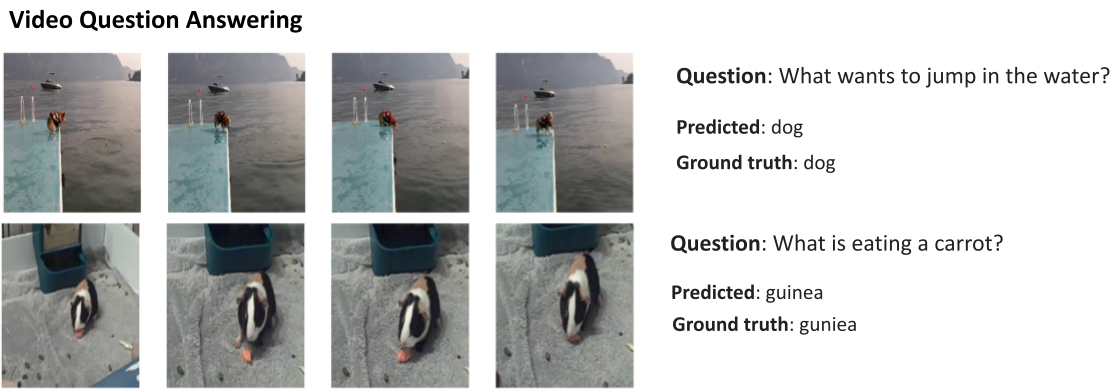

Moreover, MaMMUT easily adapts to video-language tasks. Previous approaches used a vision encoder to process each frame individually, which required applying it multiple times. This is slow and restricts the number of frames the model can handle, typically to only 6–8. With MaMMUT, we use sparse video tubes for lightweight adaptation that draws directly on the spatio-temporal information in the video. Furthermore, adapting the model to Open-Vocabulary Detection is done by simply training it to detect bounding boxes via an object-detection head.
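
To give a feel for why sparse tubes can be cheaper than per-frame encoding, the sketch below enumerates the start positions of 3D tubes sampled from a video with strides larger than the tube size, and compares the resulting token count with per-frame patching. The tube shape, strides, patch size, and frame counts are hypothetical values chosen for this example, and the helper names are not from the MaMMUT code.

```python
def tube_starts(video_shape, tube_shape, stride):
    """Return the (t, y, x) start index of every tube sampled with the given stride.

    video_shape, tube_shape, and stride are (time, height, width) triples.
    A stride larger than the tube size leaves gaps, i.e. sparse sampling.
    """
    frames, height, width = video_shape
    t, h, w = tube_shape
    st, sh, sw = stride
    return [(a, b, c)
            for a in range(0, frames - t + 1, st)
            for b in range(0, height - h + 1, sh)
            for c in range(0, width - w + 1, sw)]

def per_frame_patch_count(num_frames, height, width, patch):
    """Token count when every frame is split into non-overlapping square patches."""
    return num_frames * (height // patch) * (width // patch)

# Hypothetical setting: 64 frames of 224x224, 8x8x8 tubes, strides (16, 32, 32).
tubes = tube_starts((64, 224, 224), (8, 8, 8), (16, 32, 32))
# Baseline: 8 frames, each encoded separately as 16x16 patches.
baseline_tokens = per_frame_patch_count(8, 224, 224, 16)
```

Under these assumed settings the sparse tubes cover eight times as many frames while producing fewer tokens than the per-frame baseline, and the encoder is applied once to the whole set of tube tokens.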

Results

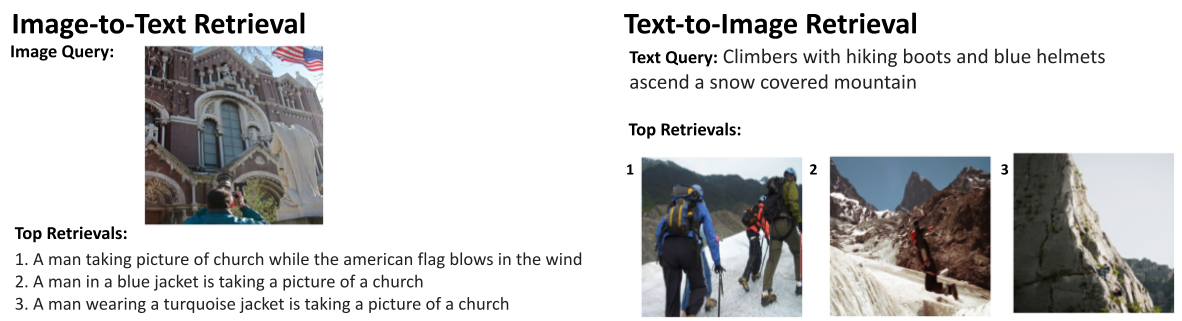

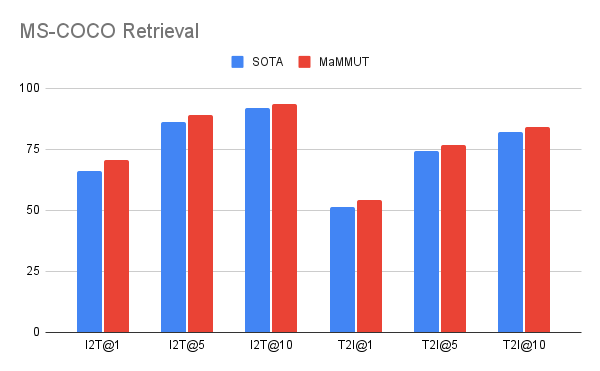

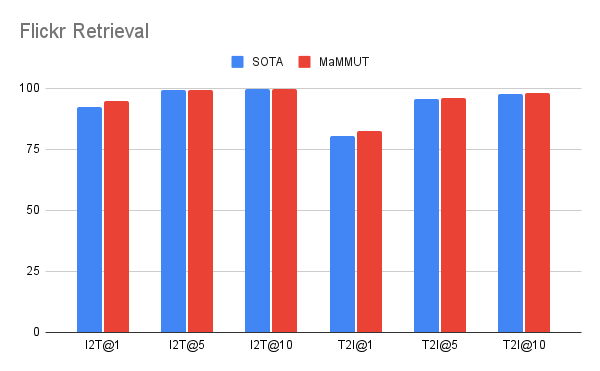

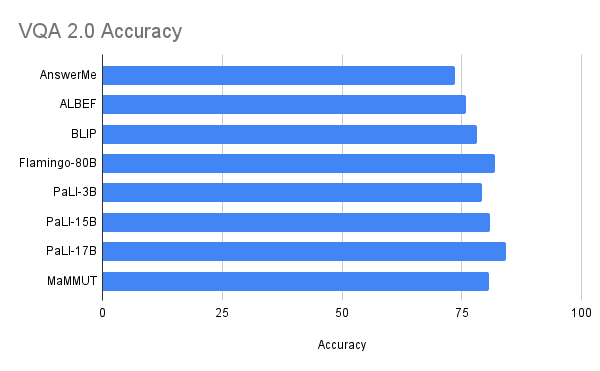

Our model achieves excellent zero-shot results on image-text and text-image retrieval without any adaptation, outperforming all previous state-of-the-art models. The results on VQA are competitive with state-of-the-art results, which are achieved by much larger models. The PaLI model (17B parameters) and the Flamingo model (80B) have the best performance on the VQA2.0 dataset, but MaMMUT (2B) has the same accuracy as the 15B PaLI.

MaMMUT outperforms the state of the art (SOTA) on Zero-Shot Image-Text (I2T) and Text-Image (T2I) retrieval on both MS-COCO (top) and Flickr (bottom) benchmarks.

Performance on the VQA2.0 dataset is competitive but does not outperform large models such as Flamingo-80B and PaLI-17B. Performance is evaluated in the more challenging open-ended text generation setting.

MaMMUT also outperforms the state of the art on VideoQA, as shown below on the MSRVTT-QA and MSVD-QA datasets. Note that we outperform much bigger models such as Flamingo, which is specifically designed for image+video pre-training and is pre-trained with both image-text and video-text data.

Our results also outperform the state of the art on open-vocabulary detection fine-tuning, as shown below.

Key ingredients

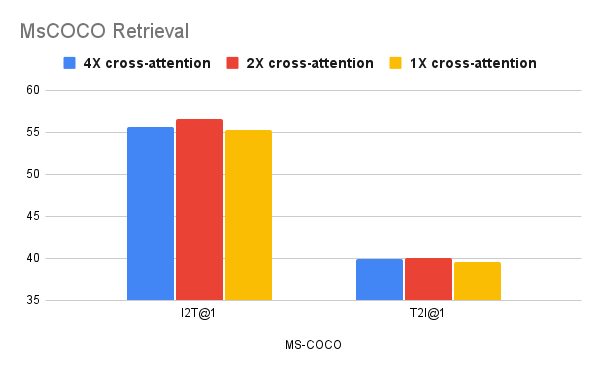

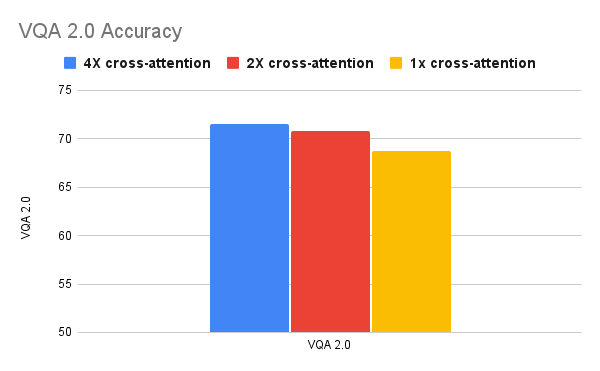

We show that joint training of both contrastive and text-generative objectives is not an easy task, and in our ablations we find that these tasks are served better by different design choices. We see that fewer cross-attention connections are better for retrieval tasks, but more are preferred by VQA tasks. Yet, while this shows that our model’s design choices might be suboptimal for individual tasks, our model is more effective than more complex, or larger, models.

Ablation studies showing that fewer cross-attention connections (1-2) are better for retrieval tasks (top), whereas more connections favor text-generative tasks such as VQA (bottom).

Conclusion

We presented MaMMUT, a simple and compact vision-encoder language-decoder model that jointly trains a number of conflicting objectives to reconcile contrastive-like and text-generative tasks. Our model also serves as a foundation for many more vision-language tasks, achieving state-of-the-art or competitive performance on image-text and text-image retrieval, VideoQA, video captioning, open-vocabulary detection, and VQA. We hope it can be further used for more multimodal applications.

Acknowledgements

The work described is co-authored by: Weicheng Kuo, AJ Piergiovanni, Dahun Kim, Xiyang Luo, Ben Caine, Wei Li, Abhijit Ogale, Luowei Zhou, Andrew Dai, Zhifeng Chen, Claire Cui, and Anelia Angelova. We would like to thank Mojtaba Seyedhosseini, Vijay Vasudevan, Priya Goyal, Jiahui Yu, Zirui Wang, Yonghui Wu, Runze Li, Jie Mei, Radu Soricut, Qingqing Huang, Andy Ly, Nan Du, Yuxin Wu, Tom Duerig, Paul Natsev, and Zoubin Ghahramani for their help and support.