Powerful machine-learning algorithms known as vision and language models, which learn to match text with images, have shown remarkable results when asked to generate captions or summarize videos.

While these models excel at identifying objects, they often struggle to understand concepts, like object attributes or the arrangement of items in a scene. For instance, a vision and language model might recognize the cup and table in an image, but fail to grasp that the cup is sitting on the table.

Researchers from MIT, the MIT-IBM Watson AI Lab, and elsewhere have demonstrated a new technique that uses computer-generated data to help vision and language models overcome this shortcoming.

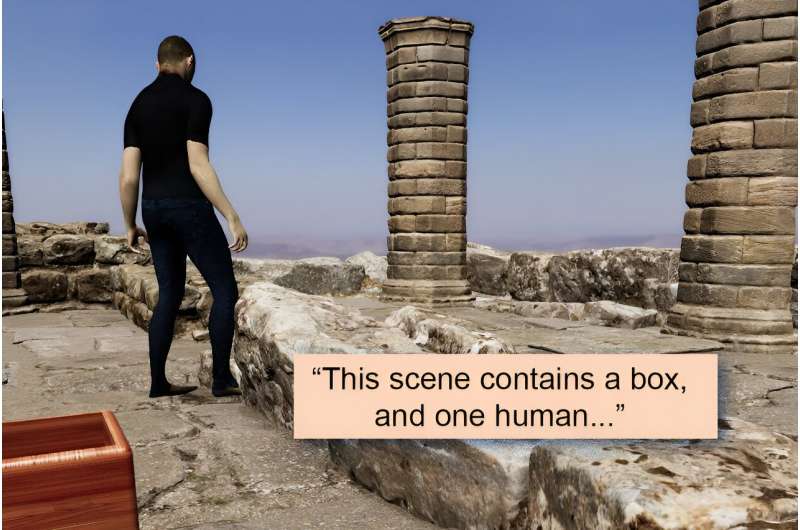

The researchers created a synthetic dataset of images that depict a wide range of scenarios, object arrangements, and human actions, coupled with detailed text descriptions. They used this annotated dataset to “fix” vision and language models so they can learn concepts more effectively. Their technique ensures these models can still make accurate predictions when they see real images.

When they tested models on concept understanding, the researchers found that their technique boosted accuracy by up to 10%. This could improve systems that automatically caption videos or enhance models that provide natural language answers to questions about images, with applications in fields like e-commerce or health care.

“With this work, we are going beyond nouns in the sense that we are going beyond just the names of objects to more of the semantic concept of an object and everything around it. Our idea was that, when a machine-learning model sees objects in many different arrangements, it will have a better idea of how arrangement matters in a scene,” says Khaled Shehada, a graduate student in the Department of Electrical Engineering and Computer Science and co-author of a paper on this technique.

Shehada wrote the paper with lead author Paola Cascante-Bonilla, a computer science graduate student at Rice University; Aude Oliva, director of strategic industry engagement at the MIT Schwarzman College of Computing, MIT director of the MIT-IBM Watson AI Lab, and a senior research scientist in the Computer Science and Artificial Intelligence Laboratory (CSAIL); senior author Leonid Karlinsky, a research staff member in the MIT-IBM Watson AI Lab; and others at MIT, the MIT-IBM Watson AI Lab, Georgia Tech, Rice University, École des Ponts, Weizmann Institute of Science, and IBM Research. The paper will be presented at the International Conference on Computer Vision, held in Paris October 2–6.

Focusing on objects

Vision and language models typically learn to identify objects in a scene, and can end up ignoring object attributes, such as color and size, or positional relationships, such as which object is on top of another object.

This is due to the method with which these models are often trained, known as contrastive learning. This training method involves forcing a model to predict the correspondence between images and text. When comparing natural images, the objects in each scene tend to cause the most striking differences. (Perhaps one image shows a horse in a field while the second shows a sailboat on the water.)

“Every image could be uniquely defined by the objects in the image. So, when you do contrastive learning, just focusing on the nouns and objects would solve the problem. Why would the model do anything differently?” says Karlinsky.
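To make the idea concrete, here is a minimal sketch of a symmetric image-text contrastive loss of the kind commonly used to train such models. It is an illustration only, not the researchers' training code: the function names, the temperature of 0.1, and the similarity scores in the two toy batches are all assumptions made up for this example.

```python
import math


def softmax_cross_entropy(logits, target_index):
    """Cross-entropy of a softmax over `logits` against one correct index."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_sum - logits[target_index]


def contrastive_loss(similarity, temperature=0.1):
    """Symmetric image-text contrastive loss (hypothetical helper).

    similarity[i][j] is the similarity between image i and caption j.
    Matching image-caption pairs sit on the diagonal.
    """
    n = len(similarity)
    scaled = [[s / temperature for s in row] for row in similarity]
    # Image -> text: each image (row) should pick out its own caption.
    image_to_text = sum(softmax_cross_entropy(scaled[i], i) for i in range(n)) / n
    # Text -> image: each caption (column) should pick out its own image.
    columns = [[scaled[i][j] for i in range(n)] for j in range(n)]
    text_to_image = sum(softmax_cross_entropy(columns[j], j) for j in range(n)) / n
    return (image_to_text + text_to_image) / 2


# Assumed scores: a horse in a field vs. a sailboat on the water.
# Different objects make the wrong pairings easy to reject.
easy_batch = [[0.9, 0.1], [0.1, 0.9]]

# Assumed scores: two scenes with the same cup and table, arranged differently.
# A model that only tracks objects scores the wrong pairings almost as highly.
hard_batch = [[0.9, 0.8], [0.8, 0.9]]

easy_loss = contrastive_loss(easy_batch)
hard_loss = contrastive_loss(hard_batch)
```

With these made-up scores, the loss on the first batch is close to zero while the loss on the second is much larger. Batches made only of the first kind of pair give a model little reason to learn anything beyond object names.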

The researchers sought to mitigate this problem by using synthetic data to fine-tune a vision and language model. The fine-tuning process involves tweaking a model that has already been trained to improve its performance on a specific task.

They used a computer to automatically create synthetic videos with diverse 3D environments and objects, such as furniture and luggage, and added human avatars that interacted with the objects.

Using individual frames of these videos, they generated nearly 800,000 photorealistic images, and then paired each with a detailed caption. The researchers developed a methodology for annotating every aspect of the image to capture object attributes, positional relationships, and human-object interactions clearly and consistently in dense captions.

Because the researchers created the images, they could control the appearance and position of objects, as well as the gender, clothing, poses, and actions of the human avatars.
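Because every scene parameter is known in advance, a dense caption can in principle be written directly from the scene description instead of being annotated by hand. The sketch below shows one way that might look. The `SceneObject` and `Relation` classes, the `dense_caption` function, and the sentence templates are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    """One object placed in a synthetic scene (hypothetical schema)."""
    name: str
    color: str
    size: str


@dataclass(frozen=True)
class Relation:
    """A positional relationship between two objects."""
    subject: SceneObject
    predicate: str  # for example "on top of" or "next to"
    target: SceneObject


def describe(obj):
    """Spell out an object's attributes along with its name."""
    return f"{obj.size} {obj.color} {obj.name}"


def dense_caption(relations, actions):
    """Write one sentence per positional relation and per human action.

    `actions` is a list of (verb, SceneObject) pairs for the avatar.
    The fixed article "A" assumes attribute words that start with a consonant.
    """
    sentences = [
        f"A {describe(r.subject)} is {r.predicate} a {describe(r.target)}."
        for r in relations
    ]
    sentences += [
        f"A person is {verb} the {describe(obj)}." for verb, obj in actions
    ]
    return " ".join(sentences)


cup = SceneObject(name="cup", color="red", size="small")
table = SceneObject(name="table", color="brown", size="large")

caption = dense_caption(
    relations=[Relation(cup, "on top of", table)],
    actions=[("holding", cup)],
)
print(caption)
```

Changing a single field, such as the cup's color or the relation's predicate, yields a new caption that differs from the old one only in that attribute or arrangement.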

“Synthetic data allows a lot of diversity. With real images, you might not have a lot of elephants in a room, but with synthetic data, you could actually have a pink elephant in a room with a human, if you want,” Cascante-Bonilla says.

Synthetic data have other advantages, too. They are cheaper to generate than real data, yet the images are highly photorealistic. They also preserve privacy because no real humans are shown in the images. And, because data are produced automatically by a computer, they can be generated quickly in massive quantities.

By using different camera viewpoints, or slightly changing the positions or attributes of objects, the researchers created a dataset with a far wider variety of scenarios than one would find in a natural dataset.

Fine-tune, but don’t forget

However, when one fine-tunes a model with synthetic data, there is a risk that the model might “forget” what it learned when it was originally trained with real data.

The researchers employed a few techniques to prevent this problem, such as adjusting the synthetic data so colors, lighting, and shadows more closely match those found in natural images. They also made adjustments to the model’s inner workings after fine-tuning to further reduce any forgetfulness.
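The article does not say which post-fine-tuning adjustment the researchers made. One widely used way to limit forgetting, shown here purely as an assumed illustration, is to blend the original and fine-tuned weights. The function name `interpolate_weights`, the parameter `alpha`, and the toy weight values are all hypothetical.

```python
def interpolate_weights(pretrained, finetuned, alpha=0.5):
    """Blend two sets of model weights, parameter by parameter.

    alpha=0.0 returns the pretrained weights unchanged and alpha=1.0
    returns the fine-tuned weights. Values in between trade new skills
    against retained knowledge. Weights are plain dicts mapping a
    parameter name to a list of floats.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if pretrained.keys() != finetuned.keys():
        raise ValueError("both models must have the same parameter names")

    blended = {}
    for name, old_values in pretrained.items():
        new_values = finetuned[name]
        if len(old_values) != len(new_values):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        blended[name] = [
            (1 - alpha) * old + alpha * new
            for old, new in zip(old_values, new_values)
        ]
    return blended


# Toy weights, invented for this example.
pretrained = {"layer.weight": [0.0, 2.0]}
finetuned = {"layer.weight": [1.0, 4.0]}

blended = interpolate_weights(pretrained, finetuned, alpha=0.5)
```

In practice, a blending factor like `alpha` would be chosen by checking accuracy on both the new concept benchmarks and the original tasks.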

Their synthetic dataset and fine-tuning strategy improved the ability of popular vision and language models to accurately recognize concepts by up to 10%. At the same time, the models did not forget what they had already learned.

Now that they have shown how synthetic data can be used to solve this problem, the researchers want to identify ways to improve the visual quality and diversity of these data, as well as the underlying physics that makes synthetic scenes look realistic. In addition, they plan to test the limits of scalability, and investigate whether model improvement starts to plateau with larger and more diverse synthetic datasets.

More information:

Going Beyond Nouns With Vision & Language Models Using Synthetic Data. olivalab.mit.edu/Papers/going_beyond_nouns.pdf

Massachusetts Institute of Technology

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Citation: Helping computer vision and language models understand what they see (2023, September 13), retrieved 13 September 2023
