This blog post is co-written with Marat Adayev and Dmitrii Evstiukhin from Provectus.

When machine learning (ML) models are deployed into production and used to drive business decisions, the challenge often lies in operating and managing multiple models. Machine Learning Operations (MLOps) provides the technical solution to this issue, helping organizations manage, monitor, deploy, and govern their models on a centralized platform.

At-scale, real-time image recognition is a complex technical problem that also requires the implementation of MLOps. By enabling effective management of the ML lifecycle, MLOps can help account for the changes in data, models, and concepts that come with developing real-time image recognition applications.

One such application is EarthSnap, an AI-powered image recognition application that enables users to identify all types of plants and animals using the camera on their smartphone. EarthSnap was developed by Earth.com, a leading online platform for enthusiasts who are passionate about the environment, nature, and science.

Earth.com’s leadership team recognized the vast potential of EarthSnap and set out to create an application that uses the latest deep learning (DL) architectures for computer vision (CV). However, they faced challenges in managing and scaling their ML system, which consisted of various siloed ML and infrastructure components that had to be maintained manually. They needed a cloud platform and a strategic partner with proven expertise in delivering production-ready AI/ML solutions, to quickly bring EarthSnap to the market. That’s where Provectus, an AWS Premier Consulting Partner with competencies in Machine Learning, Data & Analytics, and DevOps, stepped in.

This post explains how Provectus and Earth.com were able to enhance the AI-powered image recognition capabilities of EarthSnap, reduce engineering heavy lifting, and minimize administrative costs by implementing end-to-end ML pipelines, delivered as part of a managed MLOps platform and managed AI services.

Challenges faced in the initial approach

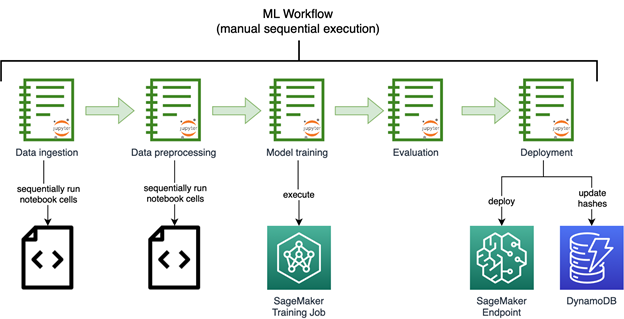

The executive team at Earth.com was eager to accelerate the launch of EarthSnap. They swiftly began to work on AI/ML capabilities by building image recognition models using Amazon SageMaker. The following diagram shows the initial image recognition ML workflow, run manually and sequentially.

The models developed by Earth.com lived across various notebooks. Processing the data and retraining the model required running a series of complex notebooks manually and in sequence. Endpoints had to be deployed manually as well.

Earth.com didn’t have an in-house ML engineering team, which made it hard to add new datasets featuring new species, release and improve new models, and scale their disjointed ML system.

The ML components for data ingestion, preprocessing, and model training were available as disjointed Python scripts and notebooks, which required a lot of manual heavy lifting on the part of engineers.

The initial solution also required the support of a technical third party to release new models swiftly and efficiently.

First iteration of the solution

Provectus served as a valuable collaborator for Earth.com, playing a crucial role in augmenting the AI-driven image recognition features of EarthSnap. The application’s workflows were automated by implementing end-to-end ML pipelines, which were delivered as part of Provectus’s managed MLOps platform and supported through managed AI services.

Provectus initiated a series of project discovery sessions to examine EarthSnap’s existing codebase and inventory the notebook scripts, with the goal of reproducing the existing model results. After the model results had been reproduced, the scattered components of the ML workflow were merged into an automated ML pipeline using Amazon SageMaker Pipelines, a purpose-built CI/CD service for ML.

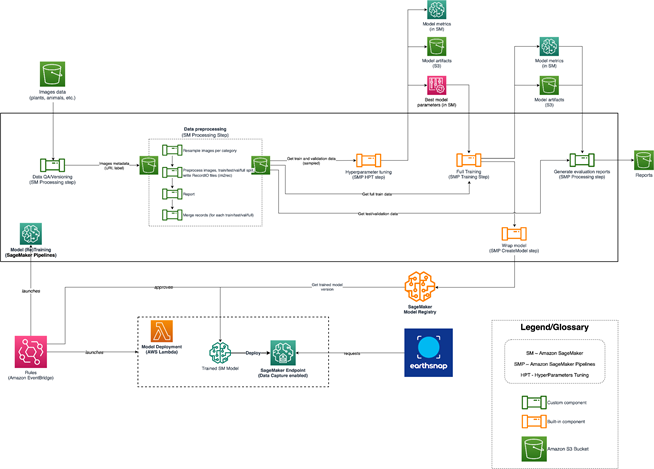

The final pipeline includes the following components:

- Data QA & versioning – This step, run as a SageMaker Processing job, ingests the source data from Amazon Simple Storage Service (Amazon S3) and prepares the metadata for the next step, containing only valid images (URI and label) that are filtered according to internal rules. It also persists a manifest file to Amazon S3, including all necessary information to recreate that dataset version.

- Data preprocessing – This includes multiple steps wrapped as SageMaker processing jobs and run sequentially. The steps preprocess the images, convert them to RecordIO format, split the images into datasets (full, train, test, and validation), and prepare the images to be consumed by SageMaker training jobs.

- Hyperparameter tuning – A SageMaker hyperparameter tuning job takes as input a subset of the training and validation set and runs a series of small training jobs under the hood to determine the best parameters for the full training job.

- Full training – A SageMaker training job step launches the training job on the full data, given the best parameters from the hyperparameter tuning step.

- Model evaluation – A SageMaker processing job step is run after the final model has been trained. This step produces an expanded report containing the model’s metrics.

- Model creation – The SageMaker ModelCreate step wraps the model into the SageMaker model package and pushes it to the SageMaker model registry.
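The Data QA & versioning step can be illustrated with a minimal, standard-library sketch. It is not the code used for EarthSnap: the filtering rule (a non-empty label and an allowed file extension), the function names, and the hash-based version identifier are all assumptions made for illustration, since the post does not describe the internal rules or the manifest format.

```python
import hashlib
import json

# Assumed validity rule for illustration only; the real internal rules are not published.
ALLOWED_EXTENSIONS = (".jpg", ".jpeg", ".png")


def filter_valid(records):
    """Keep records that have a non-empty label and an image URI with an allowed extension."""
    valid = []
    for rec in records:
        uri = rec.get("uri", "")
        label = rec.get("label", "")
        if label and uri.lower().endswith(ALLOWED_EXTENSIONS):
            valid.append({"uri": uri, "label": label})
    return valid


def build_manifest(records):
    """Build a manifest whose version id is a hash of the sorted valid records.

    Hashing the sorted content means the same set of images always maps to the
    same version id, regardless of the order in which records were ingested.
    """
    valid = sorted(filter_valid(records), key=lambda r: (r["uri"], r["label"]))
    payload = json.dumps(valid, sort_keys=True).encode("utf-8")
    return {
        "version": hashlib.sha256(payload).hexdigest()[:12],
        "num_images": len(valid),
        "records": valid,
    }
```

In a real processing job, the records would be read from Amazon S3 and the manifest written back as a JSON file; here they are plain dictionaries so that the logic can be checked in isolation.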

All steps run automatically once the pipeline is started. The pipeline can be started in any of the following ways:

- Automatically using AWS CodeBuild, after new changes are pushed to a primary branch and a new version of the pipeline is upserted (CI)

- Automatically using Amazon API Gateway, which can be triggered with a specific API call

- Manually in Amazon SageMaker Studio

After the pipeline run (launched using one of the preceding methods), a trained model is produced that is ready to be deployed as a SageMaker endpoint. The model must first be approved by the PM or engineer in the model registry; it is then automatically rolled out to the stage environment using Amazon EventBridge and tested internally. After the model is confirmed to be working as expected, it’s deployed to the production environment (CD).

The Provectus solution for EarthSnap can be summarized in the following steps:

- Start with fully automated, end-to-end ML pipelines to make it easier for Earth.com to release new models

- Build on top of the pipelines to deliver a robust ML infrastructure for the MLOps platform, featuring all components for streamlining AI/ML

- Support the solution by providing managed AI services (including ML infrastructure provisioning, maintenance, and cost monitoring and optimization)

- Bring EarthSnap to its desired state (mobile application and backend) through a series of engagements, including AI/ML work, data and database operations, and DevOps

After the foundational infrastructure and processes were established, the model was trained and retrained on a larger dataset. At this point, however, the team encountered an additional issue when attempting to expand the model with even larger datasets. We needed to find a way to restructure the solution architecture, making it more sophisticated and capable of scaling effectively. The following diagram shows the EarthSnap AI/ML architecture.

The AI/ML architecture for EarthSnap is designed around a series of AWS services:

- A SageMaker pipeline run, started using one of the methods mentioned above (CodeBuild, API, manual), trains the model and produces artifacts and metrics. As a result, the new version of the model is pushed to the SageMaker model registry

- Then the model is reviewed by an internal team (PM/engineer) in the model registry and approved or rejected based on the metrics provided

- Once the model is approved, the model version is automatically deployed to the stage environment using Amazon EventBridge, which tracks the model status change

- The model is deployed to the production environment if it passes all tests in the stage environment
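The approval-driven rollout described in this list can be sketched as an EventBridge event pattern plus a small decision function. This is a hedged illustration, not the configuration used for EarthSnap: the pattern uses the `aws.sagemaker` source and the `SageMaker Model Package State Change` detail type with a `ModelApprovalStatus` field, which should be verified against current AWS documentation. The `matches` helper is a simplified stand-in for EventBridge's own matching, and `next_action` and its return shape are hypothetical.

```python
# Event pattern that an EventBridge rule could use to react to approved models.
# Field names should be checked against the current AWS documentation.
APPROVED_MODEL_PATTERN = {
    "source": ["aws.sagemaker"],
    "detail-type": ["SageMaker Model Package State Change"],
    "detail": {"ModelApprovalStatus": ["Approved"]},
}


def matches(pattern, event):
    """Simplified local matcher: each pattern leaf is a list of allowed values."""
    for key, expected in pattern.items():
        actual = event.get(key)
        if isinstance(expected, dict):
            # Nested pattern: the event must contain a matching nested object.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


def next_action(event):
    """Return a (hypothetical) deployment request for approved models, else None."""
    if not matches(APPROVED_MODEL_PATTERN, event):
        return None
    return {
        "action": "deploy",
        "environment": "stage",
        "model_package_arn": event["detail"].get("ModelPackageArn"),
    }
```

In practice the rule would target something like an AWS Lambda function or a deployment pipeline that creates or updates the stage endpoint. Promotion to production would remain a separate step, gated on the stage tests.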

Final solution

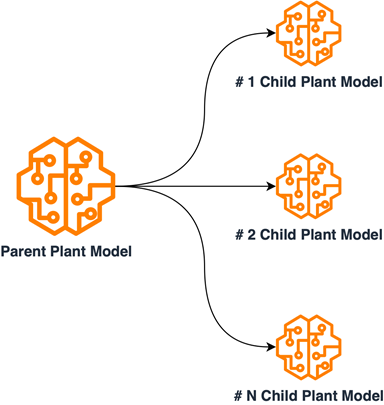

To accommodate all necessary sets of labels, the solution for EarthSnap’s model required substantial modifications, because incorporating all species within a single model proved to be both costly and inefficient. The plant category was selected first for implementation.

A thorough examination of plant data was conducted to organize it into subsets based on shared internal characteristics. The solution for the plant model was redesigned by implementing a multi-model parent/child architecture. This was achieved by training child models on grouped subsets of plant data and training the parent model on a set of data samples from each subcategory. The child models were used for accurate classification within the internally grouped species, while the parent model was used to categorize input plant images into subgroups. This design necessitated distinct training processes for each model, leading to the creation of separate ML pipelines. With this new design, along with the previously established ML/MLOps foundation, the EarthSnap application was able to cover all essential plant species, while making model maintenance and retraining more efficient. The following diagram illustrates the logical scheme of parent/child model relations.
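The parent/child routing can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the EarthSnap inference code: the classifier interface (a callable returning a label and a confidence), the `classify_plant` name, and the choice to multiply the two confidences are all hypothetical.

```python
from typing import Callable, Dict, Tuple

# Assumed interface: a classifier takes raw image bytes and returns (label, confidence).
Classifier = Callable[[bytes], Tuple[str, float]]


def classify_plant(
    image: bytes,
    parent: Classifier,
    children: Dict[str, Classifier],
) -> Dict[str, object]:
    """Two-stage inference: the parent picks a subgroup, the matching child picks a species."""
    group, group_conf = parent(image)
    child = children.get(group)
    if child is None:
        raise KeyError(f"no child model registered for subgroup {group!r}")
    species, species_conf = child(image)
    return {
        "group": group,
        "species": species,
        # Combining confidences by multiplication is an illustrative choice only.
        "confidence": group_conf * species_conf,
    }
```

One consequence of this design is that each child model can be retrained by its own pipeline when new species are added to its subgroup, without retraining the other children.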

After completing the redesign, the final challenge was to make sure that the AI solution powering EarthSnap could handle the substantial load generated by a broad user base. Fortunately, the managed AI onboarding process encompasses all essential automation, monitoring, and procedures for transitioning the solution into a production-ready state, eliminating the need for any further capital investment.

Results

Despite the pressing requirement to develop and implement the AI-driven image recognition features of EarthSnap within a few months, Provectus managed to meet all project requirements within the designated time frame. In just 3 months, Provectus modernized and productionized the ML solution for EarthSnap, ensuring that their mobile application was ready for public release.

The modernized infrastructure for ML and MLOps allowed Earth.com to reduce engineering heavy lifting and minimize the administrative costs associated with maintenance and support of EarthSnap. By streamlining processes and implementing best practices for CI/CD and DevOps, Provectus ensured that EarthSnap could achieve better performance while improving its adaptability, resilience, and security. With a focus on innovation and efficiency, we enabled EarthSnap to function flawlessly, while providing a seamless and user-friendly experience for all users.

As part of its managed AI services, Provectus was able to reduce the infrastructure management overhead, establish well-defined SLAs and processes, ensure 24/7 coverage and support, and increase overall infrastructure stability, including production workloads and critical releases. We initiated a series of enhancements to deliver a managed MLOps platform and augment ML engineering. Specifically, it now takes Earth.com minutes, instead of several days, to release new ML models for their AI-powered image recognition application.

With assistance from Provectus, Earth.com was able to release its EarthSnap application on the Apple App Store and Google Play Store ahead of schedule. The early release underscored the importance of Provectus’ comprehensive work for the client.

“I’m incredibly excited to work with Provectus. Words can’t describe how great I feel about handing over control of the technical side of business to Provectus. It’s a huge relief knowing that I don’t have to worry about anything other than developing the business side.”

– Eric Ralls, Founder and CEO of EarthSnap.

The next steps of our cooperation will include adding advanced monitoring components to pipelines, enhancing model retraining, and introducing a human-in-the-loop step.

Conclusion

The Provectus team hopes that Earth.com will continue to modernize EarthSnap with us. We look forward to powering the company’s future expansion, further popularizing natural phenomena, and doing our part to protect our planet.

To learn more about the Provectus ML infrastructure and MLOps, visit Machine Learning Infrastructure and watch the webinar for more practical advice. You can also learn more about Provectus managed AI services at Managed AI Services.

If you’re interested in building a robust infrastructure for ML and MLOps in your organization, apply for the ML Acceleration Program to get started.

Provectus helps companies in healthcare and life sciences, retail and CPG, media and entertainment, and manufacturing achieve their objectives through AI.

Provectus is an AWS Machine Learning Competency Partner and AI-first transformation consultancy and solutions provider helping design, architect, migrate, or build cloud-native applications on AWS.

Contact Provectus | Partner Overview

About the Authors

Marat Adayev is an ML Solutions Architect at Provectus

Dmitrii Evstiukhin is the Director of Managed Services at Provectus

James Burdon is a Senior Startups Solutions Architect at AWS