In today’s rapidly evolving healthcare landscape, doctors are faced with vast amounts of clinical data from various sources, such as caregiver notes, electronic health records, and imaging reports. This wealth of information, while essential for patient care, can also be overwhelming and time-consuming for medical professionals to sift through and analyze. Efficiently summarizing and extracting insights from this data is crucial for better patient care and decision-making. Summarized patient information can be useful for a number of downstream processes like data aggregation, effectively coding patients, or grouping patients with similar diagnoses for review.

Artificial intelligence (AI) and machine learning (ML) models have shown great promise in addressing these challenges. Models can be trained to analyze and interpret large volumes of text data, effectively condensing information into concise summaries. By automating the summarization process, doctors can quickly gain access to relevant information, allowing them to focus on patient care and make more informed decisions. See the following case study to learn more about a real-world use case.

Amazon SageMaker, a fully managed ML service, provides an ideal platform for hosting and implementing various AI/ML-based summarization models and approaches. In this post, we explore different options for implementing summarization techniques on SageMaker, including using Amazon SageMaker JumpStart foundation models, fine-tuning pre-trained models from Hugging Face, and building custom summarization models. We also discuss the pros and cons of each approach, enabling healthcare professionals to choose the most suitable solution for generating concise and accurate summaries of complex clinical data.

Two important terms to know before we begin: pre-trained and fine-tuning. A pre-trained or foundation model is one that has been built and trained on a large corpus of data, typically for general language knowledge. Fine-tuning is the process by which a pre-trained model is given another, more domain-specific dataset in order to enhance its performance on a specific task. In a healthcare setting, this would mean giving the model some data including phrases and terminology pertaining specifically to patient care.

Build custom summarization models on SageMaker

Though it is the most high-effort approach, some organizations might prefer to build custom summarization models on SageMaker from scratch. This approach requires more in-depth knowledge of AI/ML models and may involve creating a model architecture from scratch or adapting existing models to suit specific needs. Building custom models can offer greater flexibility and control over the summarization process, but also requires more time and resources compared to approaches that start from pre-trained models. It’s essential to weigh the benefits and drawbacks of this option carefully before proceeding, because it may not be suitable for all use cases.

SageMaker JumpStart foundation models

A great option for implementing summarization on SageMaker is using JumpStart foundation models. These models, developed by leading AI research organizations, offer a range of pre-trained language models optimized for various tasks, including text summarization. SageMaker JumpStart provides two types of foundation models: proprietary models and open-source models. SageMaker JumpStart also provides HIPAA eligibility, making it useful for healthcare workloads. It’s ultimately up to the customer to ensure compliance, so be sure to take the appropriate steps. See Architecting for HIPAA Security and Compliance on Amazon Web Services for more details.

Proprietary foundation models

Proprietary models, such as Jurassic models from AI21 and the Cohere Generate model from Cohere, can be discovered through SageMaker JumpStart on the AWS Management Console and are currently under preview. Using proprietary models for summarization is ideal when you don’t need to fine-tune your model on custom data. This offers an easy-to-use, out-of-the-box solution that can meet your summarization requirements with minimal configuration. By using the capabilities of these pre-trained models, you can save time and resources that would otherwise be spent on training and fine-tuning a custom model. Furthermore, proprietary models typically come with user-friendly APIs and SDKs, streamlining the integration process with your existing systems and applications. If your summarization needs can be met by pre-trained proprietary models without requiring specific customization or fine-tuning, they offer a convenient, cost-effective, and efficient solution for your text summarization tasks. Because these models are not trained specifically for healthcare use cases, quality can’t be guaranteed for medical language out of the box without fine-tuning.

Jurassic-2 Grande Instruct is a large language model (LLM) by AI21 Labs, optimized for natural language instructions and applicable to various language tasks. It offers an easy-to-use API and Python SDK, balancing quality and affordability. Popular uses include generating marketing copy, powering chatbots, and text summarization.

On the SageMaker console, navigate to SageMaker JumpStart, find the AI21 Jurassic-2 Grande Instruct model, and choose Try out model.

If you want to deploy the model to a SageMaker endpoint that you manage, you can follow the steps in this sample notebook, which shows you how to deploy Jurassic-2 Large using SageMaker.

Open-source foundation models

Open-source models include FLAN T5, Bloom, and GPT-2 models that can be discovered through SageMaker JumpStart in the Amazon SageMaker Studio UI, SageMaker JumpStart on the SageMaker console, and SageMaker JumpStart APIs. These models can be fine-tuned and deployed to endpoints under your AWS account, giving you full ownership of model weights and script codes.

Flan-T5 XL is a powerful and versatile model designed for a wide range of language tasks. By fine-tuning the model with your domain-specific data, you can optimize its performance for your particular use case, such as text summarization or any other NLP task. For details on how to fine-tune Flan-T5 XL using the SageMaker Studio UI, refer to Instruction fine-tuning for FLAN T5 XL with Amazon SageMaker Jumpstart.

Fine-tuning pre-trained models with Hugging Face on SageMaker

One of the most popular options for implementing summarization on SageMaker is fine-tuning pre-trained models using the Hugging Face Transformers library. Hugging Face provides a wide range of pre-trained transformer models specifically designed for various natural language processing (NLP) tasks, including text summarization. With the Hugging Face Transformers library, you can easily fine-tune these pre-trained models on your domain-specific data using SageMaker. This approach has several advantages, such as faster training times, better performance on specific domains, and easier model packaging and deployment using built-in SageMaker tools and services. If you’re unable to find a suitable model in SageMaker JumpStart, you can choose any model offered by Hugging Face and fine-tune it using SageMaker.

To start working with a model to learn about the capabilities of ML, all you need to do is open SageMaker Studio, find a pre-trained model you want to use in the Hugging Face Model Hub, and choose SageMaker as your deployment method. Hugging Face will give you the code to copy, paste, and run in your notebook. It’s as easy as that! No ML engineering experience required.

The Hugging Face Transformers library enables builders to operate on the pre-trained models and do advanced tasks like fine-tuning, which we explore in the following sections.

Provision resources

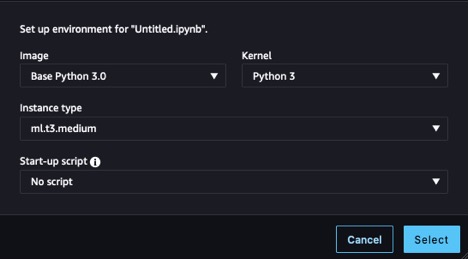

Earlier than we are able to start, we have to provision a pocket book. For directions, seek advice from Steps 1 and a pair of in Build and Train a Machine Learning Model Locally. For this instance, we used the settings proven within the following screenshot.

We also need to create an Amazon Simple Storage Service (Amazon S3) bucket to store the training data and training artifacts. For instructions, refer to Creating a bucket.

Prepare the dataset

To fine-tune our model to have better domain knowledge, we need to get data suitable for the task. When training for an enterprise use case, you’ll need to go through a number of data engineering tasks to prepare your own data to be ready for training. These tasks are outside the scope of this post. For this example, we’ve generated some synthetic data to emulate nursing notes and stored it in Amazon S3. Storing our data in Amazon S3 enables us to architect our workloads for HIPAA compliance. We start by getting these notes and loading them on the instance where our notebook is running.
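The loading step could look roughly like the following. This is a minimal sketch, not the script from the post: it assumes the notes are stored as a single CSV file with note and summary columns, and the function names, bucket, and key are hypothetical placeholders.

```python
import csv
from pathlib import Path


def download_notes(bucket: str, key: str, local_path: str) -> str:
    """Copy one object from Amazon S3 to the notebook instance."""
    # Imported lazily so the parsing helper below works without boto3 or AWS credentials.
    import boto3

    boto3.client("s3").download_file(bucket, key, local_path)
    return local_path


def load_notes(csv_path: str) -> list:
    """Read the notes file into a list of {"note": ..., "summary": ...} records."""
    with Path(csv_path).open(newline="", encoding="utf-8") as handle:
        rows = list(csv.DictReader(handle))
    # Keep only rows where both the full note and its reference summary are present.
    return [
        {"note": row["note"], "summary": row["summary"]}
        for row in rows
        if row.get("note") and row.get("summary")
    ]
```

With the Hugging Face datasets library installed, the resulting records can be turned into a dataset with datasets.Dataset.from_list(records), which is convenient for the tokenization step later on.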

The notes are composed of a column containing the full entry, note, and a column containing a shortened version exemplifying what our desired output should be, summary. The purpose of using this dataset is to improve our model’s biological and medical vocabulary so that it’s more attuned to summarizing in a healthcare context, called domain fine-tuning, and to show our model how to structure its summarized output. In some summarization cases, we may want to create an abstract out of an article or a one-line synopsis of a review, but in this case, we’re trying to get our model to output an abbreviated version of the symptoms and actions taken for a patient so far.

Load the model

The model we use as our foundation is a version of Google’s Pegasus, made available in the Hugging Face Hub, called pegasus-xsum. It’s already pre-trained for summarization, so our fine-tuning process can focus on extending its domain knowledge. Modifying the task our model runs is a different type of fine-tuning not covered in this post. The Transformers library supplies us with a class to load the model definition from our model_checkpoint: google/pegasus-xsum. This will load the model from the hub and instantiate it in our notebook so we can use it later on. Because pegasus-xsum is a sequence-to-sequence model, we want to use the Seq2Seq type of the AutoModel class.
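A sketch of the loading step, assuming the transformers package with a PyTorch backend is installed. AutoModelForSeq2SeqLM is the Seq2Seq variant of the AutoModel family; the wrapper function name is ours.

```python
MODEL_CHECKPOINT = "google/pegasus-xsum"  # checkpoint named in the text


def load_model(checkpoint: str = MODEL_CHECKPOINT):
    """Download the pre-trained weights from the Hugging Face Hub and build the model."""
    # Lazy import: transformers is only needed when the function is actually called.
    from transformers import AutoModelForSeq2SeqLM

    return AutoModelForSeq2SeqLM.from_pretrained(checkpoint)
```

Calling load_model() in the notebook downloads the checkpoint on first use, which can take a few minutes depending on the network.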

Now that we have our model, it’s time to turn our attention to the other components that will enable us to run our training loop.

Create a tokenizer

The first of these components is the tokenizer. Tokenization is the process by which words from the input data are transformed into numerical representations that our model can understand. Again, the Transformers library provides a class for us to load a tokenizer definition from the same checkpoint we used to instantiate the model.

With this tokenizer object, we can create a preprocessing function and map it onto our dataset to give us tokens ready to be fed into the model. Finally, we format the tokenized output and remove the columns containing our original text, because the model will not be able to interpret them. Now we’re left with a tokenized input ready to be fed into the model.
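The tokenizer and preprocessing steps described above might be sketched as follows. The function names and the two length limits are assumptions chosen for illustration, not values from the post, and the dataset is assumed to be a Hugging Face datasets.Dataset with note and summary columns.

```python
def load_tokenizer(checkpoint: str = "google/pegasus-xsum"):
    """Load the tokenizer that matches the model checkpoint."""
    # Lazy import; the Pegasus tokenizer also needs the sentencepiece package.
    from transformers import AutoTokenizer

    return AutoTokenizer.from_pretrained(checkpoint)


def preprocess(batch, tokenizer, max_input_length=512, max_target_length=64):
    """Tokenize a batch of notes and attach the tokenized summaries as labels."""
    model_inputs = tokenizer(
        batch["note"], max_length=max_input_length, truncation=True
    )
    labels = tokenizer(
        text_target=batch["summary"], max_length=max_target_length, truncation=True
    )
    model_inputs["labels"] = labels["input_ids"]
    return model_inputs


def tokenize_dataset(dataset, tokenizer):
    """Map preprocess over the dataset and drop the raw text columns."""
    tokenized = dataset.map(
        lambda batch: preprocess(batch, tokenizer),
        batched=True,
        remove_columns=["note", "summary"],
    )
    tokenized.set_format("torch")  # return tensors instead of Python lists
    return tokenized
```

Passing the tokenizer in as an argument keeps preprocess easy to exercise on a handful of rows before mapping it over the full dataset.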

With our data tokenized and our model instantiated, we’re almost ready to run a training loop. The next components we want to create are the data collator and the optimizer. The data collator is another class provided by Hugging Face through the Transformers library, which we use to create batches of our tokenized data for training. We can easily build this using the tokenizer and model objects we already have, simply by finding the corresponding class type we’ve used previously for our model (Seq2Seq) for the collator class. The optimizer’s function is to maintain the training state and update the parameters based on our training loss as we work through the loop. To create an optimizer, we can import the optim package from the torch module, where a number of optimization algorithms are available. Some common ones you may have encountered before are Stochastic Gradient Descent and Adam, the latter of which is used in our example. Adam’s constructor takes in the model parameters and the parameterized learning rate for the given training run.
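A sketch of these two components, assuming torch and transformers are installed. The learning rate shown is a placeholder of ours, not a value taken from the post, and the function name is hypothetical.

```python
LEARNING_RATE = 2e-5  # assumed placeholder; tune for your own dataset


def build_collator_and_optimizer(tokenizer, model, learning_rate=LEARNING_RATE):
    """Create the batch collator and the Adam optimizer for the training loop."""
    # Lazy imports so this definition can be loaded without the heavy dependencies.
    import torch
    from transformers import DataCollatorForSeq2Seq

    # Pads inputs and labels to a common length within each batch.
    data_collator = DataCollatorForSeq2Seq(tokenizer, model=model)
    # Adam takes the model parameters and the learning rate for this run.
    optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)
    return data_collator, optimizer
```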

The last steps before we can begin training are to build the accelerator and the learning rate scheduler. The accelerator comes from a different library (we’ve been primarily using Transformers) produced by Hugging Face, aptly named Accelerate, and will abstract away the logic required to manage devices during training (using multiple GPUs, for example). For the final component, we revisit the ever-useful Transformers library to implement our learning rate scheduler. By specifying the scheduler type, the total number of training steps in our loop, and the previously created optimizer, the get_scheduler function returns an object that enables us to adjust our initial learning rate throughout the training process.
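The sketch below shows one way to build both objects, assuming the accelerate and transformers packages are installed. The post does not say which scheduler type it uses, so the "linear" type, the zero warmup, and the epoch count here are assumptions. The small linear_lr helper is ours and only illustrates what a linear schedule does to the learning rate over time.

```python
def linear_lr(step, base_lr, num_training_steps, num_warmup_steps=0):
    """Learning rate at a given step: linear warmup, then linear decay to zero."""
    if step < num_warmup_steps:
        return base_lr * step / max(1, num_warmup_steps)
    remaining = max(0, num_training_steps - step)
    return base_lr * remaining / max(1, num_training_steps - num_warmup_steps)


def build_accelerator_and_scheduler(model, optimizer, train_dataloader, num_epochs=3):
    """Wrap the training objects with Accelerate and create the scheduler."""
    # Lazy imports so linear_lr can be used without the heavy dependencies.
    from accelerate import Accelerator
    from transformers import get_scheduler

    accelerator = Accelerator()
    # prepare() moves everything to the available device(s).
    model, optimizer, train_dataloader = accelerator.prepare(
        model, optimizer, train_dataloader
    )
    num_training_steps = num_epochs * len(train_dataloader)
    lr_scheduler = get_scheduler(
        "linear",  # assumed scheduler type
        optimizer=optimizer,
        num_warmup_steps=0,
        num_training_steps=num_training_steps,
    )
    return accelerator, model, optimizer, train_dataloader, lr_scheduler
```

For example, with a base rate of 1e-4 and 100 training steps, linear_lr gives the full rate at step 0, half of it at step 50, and zero at step 100.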

We’re now fully set up for training! Let’s set up a training job, starting by instantiating the training_args using the Transformers library and choosing parameter values. We can pass these, along with our other prepared components and dataset, directly to the trainer and start training. Depending on the size of your dataset and chosen parameters, this may take a significant amount of time.
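A minimal sketch of the training step follows. The parameter values in TRAINING_CONFIG are illustrative assumptions, not the ones used in the post, and the train function is a hypothetical wrapper. The original script is not reproduced here, so exactly how it combines the trainer with the previously built optimizer and scheduler is not known; this sketch passes them through the trainer’s optimizers argument.

```python
# Assumed example values; choose your own for a real training run.
TRAINING_CONFIG = {
    "output_dir": "model_dir",
    "learning_rate": 2e-5,
    "per_device_train_batch_size": 4,
    "num_train_epochs": 3,
    "weight_decay": 0.01,
}


def train(model, tokenized_dataset, data_collator, optimizer=None, lr_scheduler=None):
    """Instantiate the training arguments and trainer, then run training."""
    # Lazy import so the configuration can be inspected without transformers.
    from transformers import Seq2SeqTrainer, Seq2SeqTrainingArguments

    training_args = Seq2SeqTrainingArguments(**TRAINING_CONFIG)
    trainer = Seq2SeqTrainer(
        model=model,
        args=training_args,
        train_dataset=tokenized_dataset,
        data_collator=data_collator,
        # (None, None) lets the trainer create its own optimizer and scheduler.
        optimizers=(optimizer, lr_scheduler),
    )
    trainer.train()
    return trainer
```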

Package the model for inference

After training has been run, the model object is ready to be used for inference. As a best practice, let’s save our work for future use. We need to create our model artifacts, zip them together, and upload our tarball to Amazon S3 for storage. To prepare our model for zipping, we need to unwrap the now fine-tuned model, then save the model binary and associated config files. We also need to save our tokenizer to the same directory that we saved our model artifacts to so it’s available when we use the model for inference. Our model_dir folder should now contain the model binary, its config files, and the tokenizer files.

All that’s left is to run a tar command to zip up our directory and upload the tar.gz file to Amazon S3.
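The post uses a tar command for this step; the sketch below is a Python equivalent using the standard tarfile module, followed by an upload with boto3. The function names, bucket, and key are hypothetical. Files are added at the root of the archive, which is the layout SageMaker expects for model.tar.gz.

```python
import tarfile
from pathlib import Path


def make_model_tarball(model_dir: str, archive_path: str = "model.tar.gz") -> str:
    """Compress every file in model_dir into a gzip tarball, at the archive root."""
    with tarfile.open(archive_path, "w:gz") as archive:
        for path in sorted(Path(model_dir).iterdir()):
            # arcname drops the directory prefix so files sit at the top level.
            archive.add(str(path), arcname=path.name)
    return archive_path


def upload_tarball(archive_path: str, bucket: str, key: str) -> str:
    """Upload the tarball to Amazon S3 and return its S3 URI."""
    # Lazy import so the tarball helper works without boto3 or AWS credentials.
    import boto3

    boto3.client("s3").upload_file(archive_path, bucket, key)
    return f"s3://{bucket}/{key}"
```

Write the archive outside model_dir so that the tarball does not try to include itself.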

Our newly fine-tuned model is now ready and available to be used for inference.

Perform inference

To use this model artifact for inference, open a new file and create a HuggingFaceModel, setting the model_data parameter to your artifact save location in Amazon S3. The HuggingFaceModel constructor will rebuild our model from the checkpoint we saved to model.tar.gz, which we can then deploy for inference using the deploy method. Deploying the endpoint will take a few minutes.
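A sketch of the deployment step, assuming the SageMaker Python SDK is installed and an execution role is available. The framework versions, the HF_TASK setting, and the instance type are assumptions for illustration; check them against the Hugging Face containers currently supported by SageMaker before use. The function names are ours.

```python
def huggingface_model_kwargs(model_data: str, role: str) -> dict:
    """Collect the constructor arguments for HuggingFaceModel."""
    return {
        "model_data": model_data,  # S3 URI of model.tar.gz
        "role": role,  # IAM role ARN with SageMaker permissions
        "transformers_version": "4.26",  # assumed version combination
        "pytorch_version": "1.13",
        "py_version": "py39",
        "env": {"HF_TASK": "summarization"},  # tells the container which pipeline to run
    }


def deploy_model(model_data: str, role: str, instance_type: str = "ml.m5.xlarge"):
    """Rebuild the model from the saved artifact and deploy it to an endpoint."""
    # Lazy import so the argument helper works without the SageMaker SDK.
    from sagemaker.huggingface import HuggingFaceModel

    model = HuggingFaceModel(**huggingface_model_kwargs(model_data, role))
    return model.deploy(initial_instance_count=1, instance_type=instance_type)
```

The deploy call returns a predictor object that is bound to the new endpoint.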

After the endpoint is deployed, we can use the predictor we’ve created to test it. Pass the predict method a data payload and run the cell, and you’ll get the response from your fine-tuned model.
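A small sketch of the call, assuming the endpoint runs the Hugging Face summarization pipeline, which takes a payload with an inputs key and returns a list of dictionaries with a summary_text field. The summarize helper is a hypothetical convenience wrapper.

```python
def summarize(predictor, note: str) -> str:
    """Send one note to the endpoint and return the generated summary text."""
    response = predictor.predict({"inputs": note})
    # Assumed response shape: [{"summary_text": "..."}]
    return response[0]["summary_text"]
```

In the notebook, this would be called as summarize(predictor, note_text) with the predictor returned by the deploy method.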

To see the benefit of fine-tuning a model, let’s do a quick test. The following table includes a prompt and the results of passing that prompt to the model before and after fine-tuning.

| Prompt | Response with No Fine-Tuning | Response with Fine-Tuning |
| --- | --- | --- |
| Summarize the symptoms that the patient is experiencing. Patient is a 45 year old male with complaints of substernal chest pain radiating to the left arm. Pain is sudden onset while he was doing yard work, associated with mild shortness of breath and diaphoresis. On arrival patient’s heart rate was 120, respiratory rate 24, blood pressure 170/95. 12 lead electrocardiogram done on arrival to the emergency department and three sublingual nitroglycerin administered without relief of chest pain. Electrocardiogram shows ST elevation in anterior leads demonstrating acute anterior myocardial infarction. We have contacted cardiac catheterization lab and prepping for cardiac catheterization by cardiologist. | We present a case of acute myocardial infarction. | Chest pain, anterior MI, PCI. |

As you can see, our fine-tuned model uses health terminology differently, and we’ve been able to change the structure of the response to fit our purposes. Note that results are dependent on your dataset and the design choices made during training. Your version of the model could offer very different results.

Clean up

When you’re finished with your SageMaker notebook, be sure to shut it down to avoid costs from long-running resources. Note that shutting down the instance will cause you to lose any data stored in the instance’s ephemeral memory, so you should save all your work to persistent storage before cleanup. You will also need to go to the Endpoints page on the SageMaker console and delete any endpoints deployed for inference. To remove all artifacts, you also need to go to the Amazon S3 console to delete files uploaded to your bucket.

Conclusion

In this post, we explored various options for implementing text summarization techniques on SageMaker to help healthcare professionals efficiently process and extract insights from vast amounts of clinical data. We discussed using SageMaker JumpStart foundation models, fine-tuning pre-trained models from Hugging Face, and building custom summarization models. Each approach has its own advantages and drawbacks, catering to different needs and requirements.

Building custom summarization models on SageMaker allows for lots of flexibility and control but requires more time and resources than using pre-trained models. SageMaker JumpStart foundation models provide an easy-to-use and cost-effective solution for organizations that don’t require specific customization or fine-tuning, as well as some options for simplified fine-tuning. Fine-tuning pre-trained models from Hugging Face offers faster training times, better domain-specific performance, and seamless integration with SageMaker tools and services across a broad catalog of models, but it requires some implementation effort. At the time of writing this post, Amazon has announced another option, Amazon Bedrock, which will offer summarization capabilities in an even more managed environment.

By understanding the pros and cons of each approach, healthcare professionals and organizations can make informed decisions on the most suitable solution for generating concise and accurate summaries of complex clinical data. Ultimately, using AI/ML-based summarization models on SageMaker can significantly enhance patient care and decision-making by enabling medical professionals to quickly access relevant information and focus on providing quality care.

Resources

For the full script discussed in this post and some sample data, refer to the GitHub repo. For more information on how to run ML workloads on AWS, see the following resources:

About the authors

Cody Collins is a New York-based Solutions Architect at Amazon Web Services. He works with ISV customers to build industry-leading solutions in the cloud. He has successfully delivered complex projects for diverse industries, optimizing efficiency and scalability. In his spare time, he enjoys reading, traveling, and training jiu jitsu.

Ameer Hakme is an AWS Solutions Architect residing in Pennsylvania. His professional focus involves collaborating with independent software vendors throughout the Northeast, guiding them in designing and constructing scalable, state-of-the-art platforms on the AWS Cloud.
