Customers expect quick and efficient service from businesses in today’s fast-paced world. But providing excellent customer service can be significantly challenging when the volume of inquiries outpaces the human resources employed to address them. However, businesses can meet this challenge while providing personalized and efficient customer service with the advancements in generative artificial intelligence (generative AI) powered by large language models (LLMs).

Generative AI chatbots have gained notoriety for their ability to imitate human intellect. However, unlike task-oriented bots, these bots use LLMs for text analysis and content generation. LLMs are based on the Transformer architecture, a deep learning neural network introduced in June 2017 that can be trained on a massive corpus of unlabeled text. This approach creates a more human-like conversation experience and accommodates several topics.

As of this writing, companies of all sizes want to use this technology but need help figuring out where to start. If you are looking to get started with generative AI and the use of LLMs in conversational AI, this post is for you. We have included a sample project to quickly deploy an Amazon Lex bot that consumes a pre-trained open-source LLM. The code also includes the starting point to implement a custom memory manager. This mechanism allows an LLM to recall previous interactions to keep the conversation’s context and pace. Finally, it’s essential to highlight the importance of experimenting with fine-tuning prompts and LLM randomness and determinism parameters to obtain consistent results.

Solution overview

The solution integrates an Amazon Lex bot with a popular open-source LLM from Amazon SageMaker JumpStart, accessible through an Amazon SageMaker endpoint. We also use LangChain, a popular framework that simplifies LLM-powered applications. Finally, we use a QnABot to provide a user interface for our chatbot.

First, we describe each component in the architecture diagram:

- JumpStart offers pre-trained open-source models for various problem types. This enables you to begin machine learning (ML) quickly. It includes the FLAN-T5-XL model, an LLM deployed into a deep learning container. It performs well on various natural language processing (NLP) tasks, including text generation.

- A SageMaker real-time inference endpoint enables fast, scalable deployment of ML models for predicting events. With the ability to integrate with Lambda functions, the endpoint allows for building custom applications.

- The AWS Lambda function uses the requests from the Amazon Lex bot or the QnABot to prepare the payload to invoke the SageMaker endpoint using LangChain. LangChain is a framework that lets developers create applications powered by LLMs.

- The Amazon Lex V2 bot has the built-in

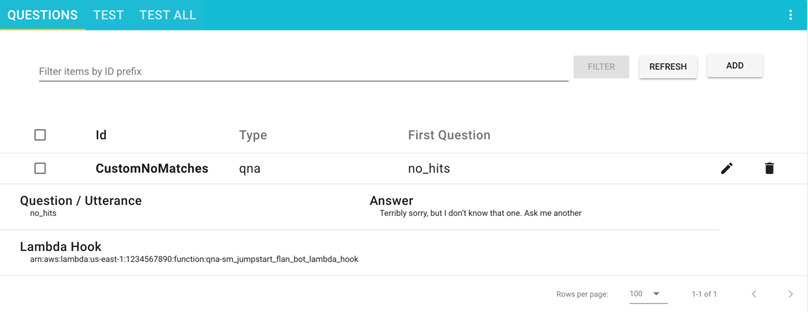

AMAZON.FallbackIntentintent sort. It’s triggered when a person’s enter doesn’t match any intents within the bot. - The QnABot is an open-source AWS answer to supply a person interface to Amazon Lex bots. We configured it with a Lambda hook operate for a

CustomNoMatchesmerchandise, and it triggers the Lambda operate when QnABot can’t discover a solution. We assume you will have already deployed it and included the steps to configure it within the following sections.
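To make the payload step concrete, here is a minimal sketch of how a Lambda function could call the SageMaker endpoint. It uses boto3 directly rather than LangChain, so it is not the project's code. The request and response field names (`text_inputs`, `generated_texts`, `generated_text`) are assumptions about the JumpStart text2text schema and may differ for your model version; `build_payload`, `parse_response`, and `invoke_llm` are hypothetical helper names.

```python
import json


def build_payload(prompt, max_length=100, temperature=0.7, top_p=0.9):
    """Build a JSON request body for a text2text endpoint (assumed schema)."""
    return json.dumps({
        "text_inputs": prompt,       # assumed input field name
        "max_length": max_length,
        "temperature": temperature,  # randomness
        "top_p": top_p,              # nucleus sampling threshold
        "do_sample": True,
    }).encode("utf-8")


def parse_response(body):
    """Extract the first generated text from the JSON response (assumed schema)."""
    data = json.loads(body)
    texts = data.get("generated_texts") or [data.get("generated_text", "")]
    return texts[0].strip()


def invoke_llm(endpoint_name, prompt):
    """Send the prompt to a SageMaker real-time endpoint and return the answer."""
    import boto3  # imported lazily so the helpers above work without AWS access

    client = boto3.client("sagemaker-runtime")
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(prompt),
    )
    return parse_response(response["Body"].read())
```

Keeping payload construction and response parsing separate from the network call makes both easy to unit test before the endpoint exists.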

The solution is described at a high level in the following sequence diagram.

Main tasks performed by the solution

In this section, we look at the main tasks performed in our solution. This solution’s entire project source code is available for your reference in this GitHub repository.

Handling chatbot fallbacks

The Lambda function handles the “don’t know” answers via AMAZON.FallbackIntent in Amazon Lex V2 and the CustomNoMatches item in QnABot. When triggered, this function looks at the request for a session and the fallback intent. If there is a match, it hands off the request to a Lex V2 dispatcher; otherwise, the QnABot dispatcher uses the request. See the following code:
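As a rough illustration of that routing (a sketch with hypothetical names, not the project's actual listing), the handler below checks for a Lex V2 session and the fallback intent, then picks a dispatcher. It assumes the fallback intent is named `FallbackIntent` in the bot and that the QnABot Lambda hook event carries `req.question` and `res.message`; the `generate` argument is a stand-in for the LLM call.

```python
FALLBACK_INTENT = "FallbackIntent"  # assumed intent name in the bot


def is_lex_v2_fallback(event):
    """True when the event looks like a Lex V2 request for the fallback intent."""
    intent = event.get("sessionState", {}).get("intent", {})
    return "sessionId" in event and intent.get("name") == FALLBACK_INTENT


def dispatch_lex_v2(event, generate):
    """Answer a Lex V2 request and close the intent as fulfilled."""
    answer = generate(event.get("inputTranscript", ""))
    intent = dict(event["sessionState"]["intent"], state="Fulfilled")
    return {
        "sessionState": {
            "sessionAttributes": event["sessionState"].get("sessionAttributes", {}),
            "dialogAction": {"type": "Close"},
            "intent": intent,
        },
        "messages": [{"contentType": "PlainText", "content": answer}],
    }


def dispatch_qnabot(event, generate):
    """Answer a QnABot Lambda hook request by filling in the response message."""
    event["res"]["message"] = generate(event["req"]["question"])
    return event


def lambda_handler(event, context, generate=lambda text: f"(LLM answer to: {text})"):
    """Route the request to the Lex V2 or QnABot dispatcher."""
    if is_lex_v2_fallback(event):
        return dispatch_lex_v2(event, generate)
    return dispatch_qnabot(event, generate)
```

Passing `generate` as a parameter is only a convenience for local testing; in a deployed function it would wrap the call to the SageMaker endpoint.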

Providing memory to our LLM

To preserve the LLM memory in a multi-turn conversation, the Lambda function includes a LangChain custom memory class mechanism that uses the Amazon Lex V2 Sessions API to keep track of the session attributes with the ongoing multi-turn conversation messages and to provide context to the conversational model via previous interactions. See the following code:

The following is the sample code we created for introducing the custom memory class in a LangChain ConversationChain:

Prompt definition

A prompt for an LLM is a question or statement that sets the tone for the generated response. Prompts function as a form of context that helps direct the model toward generating relevant responses. See the following code:
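For illustration only, a conversational prompt could be assembled as in the following sketch. The wording of the template and the `render_prompt` helper are assumptions, not the project's prompt. The `{history}` and `{input}` placeholders follow the naming commonly used with LangChain conversation prompts, but this example uses plain string formatting so it runs without extra dependencies.

```python
# Hypothetical template: an instruction, the prior turns, and the new question.
PROMPT_TEMPLATE = (
    "The following is a friendly conversation between a human and an AI. "
    "If the AI does not know the answer, it truthfully says it does not know.\n\n"
    "Current conversation:\n{history}\n"
    "Human: {input}\n"
    "AI:"
)


def render_prompt(history, user_input):
    """Fill the template with the conversation so far and the latest user message."""
    return PROMPT_TEMPLATE.format(history=history, input=user_input)


if __name__ == "__main__":
    print(render_prompt("Human: Hi\nAI: Hello! How can I help?", "What is Amazon Lex?"))
```

Ending the prompt with `AI:` nudges the model to continue with the assistant's turn rather than inventing another human message.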

Using an Amazon Lex V2 session for LLM memory support

Amazon Lex V2 initiates a session when a user interacts with a bot. A session persists over time unless manually stopped or timed out. A session stores metadata and application-specific data known as session attributes. Amazon Lex updates client applications when the Lambda function adds or changes session attributes. The QnABot includes an interface to set and get session attributes on top of Amazon Lex V2.

In our code, we used this mechanism to build a custom memory class in LangChain to keep track of the conversation history and enable the LLM to recall short-term and long-term interactions. See the following code:
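The idea can be sketched as follows, under stated assumptions. The class below is not the project's implementation and does not import LangChain; it only mirrors the method names of LangChain's memory interface (`memory_variables`, `load_memory_variables`, `save_context`). The `chat_history` attribute key and the `max_turns` limit are hypothetical. Because Lex V2 session attributes are string-to-string maps, the history is stored as a JSON string.

```python
import json


class LexSessionMemory:
    """Keeps recent conversation turns inside a Lex V2 session attributes dict."""

    HISTORY_KEY = "chat_history"  # hypothetical session attribute name

    def __init__(self, session_attributes, max_turns=5):
        self.session_attributes = session_attributes
        self.max_turns = max_turns  # assumed to be at least 1

    @property
    def memory_variables(self):
        """Names of the prompt variables this memory provides."""
        return ["history"]

    def _turns(self):
        # Session attribute values are strings, so the history is JSON-encoded.
        return json.loads(self.session_attributes.get(self.HISTORY_KEY, "[]"))

    def load_memory_variables(self, inputs):
        """Render stored turns as text for the prompt's history placeholder."""
        lines = [f"Human: {t['human']}\nAI: {t['ai']}" for t in self._turns()]
        return {"history": "\n".join(lines)}

    def save_context(self, inputs, outputs):
        """Append the latest turn and keep only the most recent max_turns."""
        turns = self._turns()
        turns.append({"human": inputs["input"], "ai": outputs["response"]})
        self.session_attributes[self.HISTORY_KEY] = json.dumps(turns[-self.max_turns:])
```

Returning the mutated session attributes in the Lambda response is what lets the next request see the updated history. Capping the number of turns keeps the session payload and the prompt length bounded.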

Prerequisites

To get started with the deployment, you need to fulfill the following prerequisites:

Deploy the solution

To deploy the solution, proceed with the following steps:

- Choose Launch Stack to launch the solution in the us-east-1 Region:

- For Stack name, enter a unique stack name.

- For HFModel, we use the Hugging Face Flan-T5-XL model available on JumpStart.

- For HFTask, enter text2text.

- Keep S3BucketName as is.

These are used to find Amazon Simple Storage Service (Amazon S3) assets needed to deploy the solution and may change as updates to this post are published.

- Acknowledge the capabilities.

- Choose Create stack.

There should be four successfully created stacks.

Configure the Amazon Lex V2 bot

There is nothing to do with the Amazon Lex V2 bot. Our CloudFormation template already did the heavy lifting.

Configure the QnABot

We assume you already have an existing QnABot deployed in your environment. But if you need help, follow these instructions to deploy it.

- On the AWS CloudFormation console, navigate to the main stack that you deployed.

- On the Outputs tab, make a note of the LambdaHookFunctionArn because you need to insert it in the QnABot later.

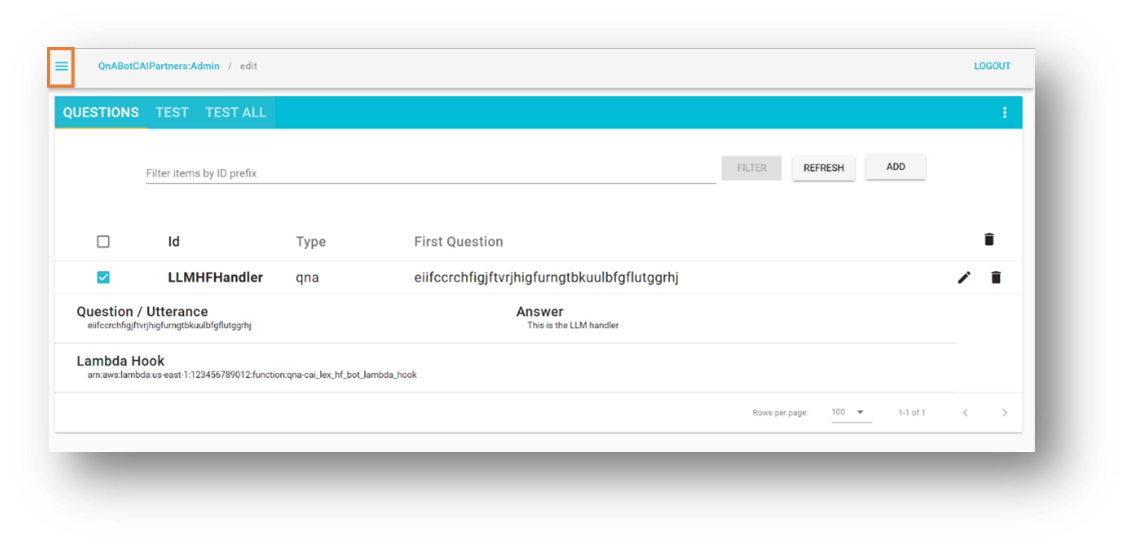

- Log in to the QnABot Designer User Interface (UI) as an administrator.

- In the Questions UI, add a new question.

- Enter the following values:

  - ID – CustomNoMatches

  - Question – no_hits

  - Answer – Any default answer for “don’t know”

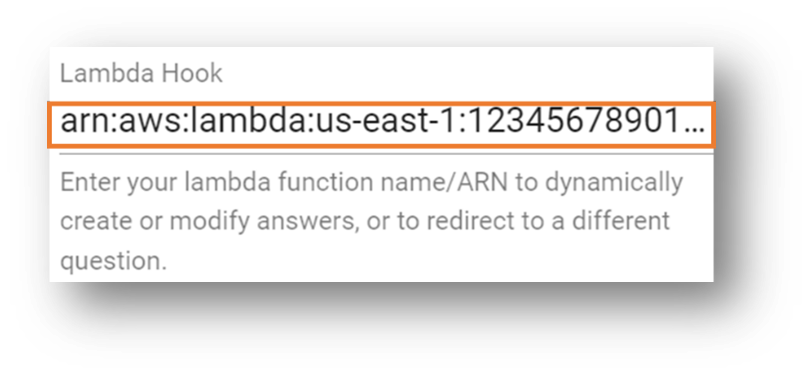

- Choose Advanced and go to the Lambda Hook section.

- Enter the Amazon Resource Name (ARN) of the Lambda function you noted previously.

- Scroll down to the bottom of the section and choose Create.

You get a window with a success message.

Your question is now visible on the Questions page.

Test the solution

Let’s proceed with testing the solution. First, it’s worth mentioning that we deployed the FLAN-T5-XL model provided by JumpStart without any fine-tuning. This may introduce some unpredictability, resulting in slight variations in responses.

Test with an Amazon Lex V2 bot

This section helps you test the Amazon Lex V2 bot integration with the Lambda function that calls the LLM deployed in the SageMaker endpoint.

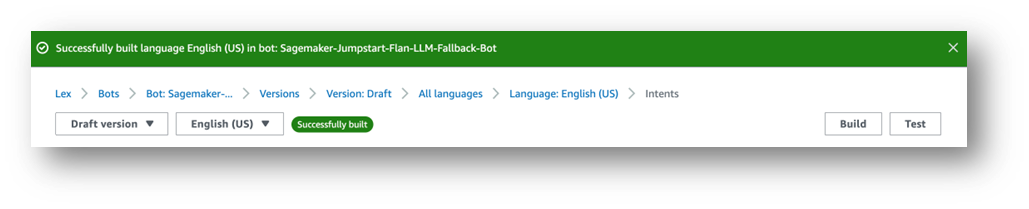

- On the Amazon Lex console, navigate to the bot entitled Sagemaker-Jumpstart-Flan-LLM-Fallback-Bot.

This bot has been configured to call the Lambda function that invokes the SageMaker endpoint hosting the LLM as a fallback intent when no other intents are matched.

- Choose Intents in the navigation pane.

On the top right, a message reads, “English (US) has not built changes.”

- Choose Build.

- Wait for it to complete.

Finally, you get a success message, as shown in the following screenshot.

- Choose Test.

A chat window appears where you can interact with the model.

We recommend exploring the built-in integrations between Amazon Lex bots and Amazon Connect, as well as messaging platforms (Facebook, Slack, Twilio SMS) or third-party contact centers using Amazon Chime SDK and Genesys Cloud, for example.

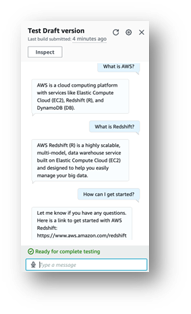

Test with a QnABot instance

This section tests the QnABot on AWS integration with the Lambda function that calls the LLM deployed in the SageMaker endpoint.

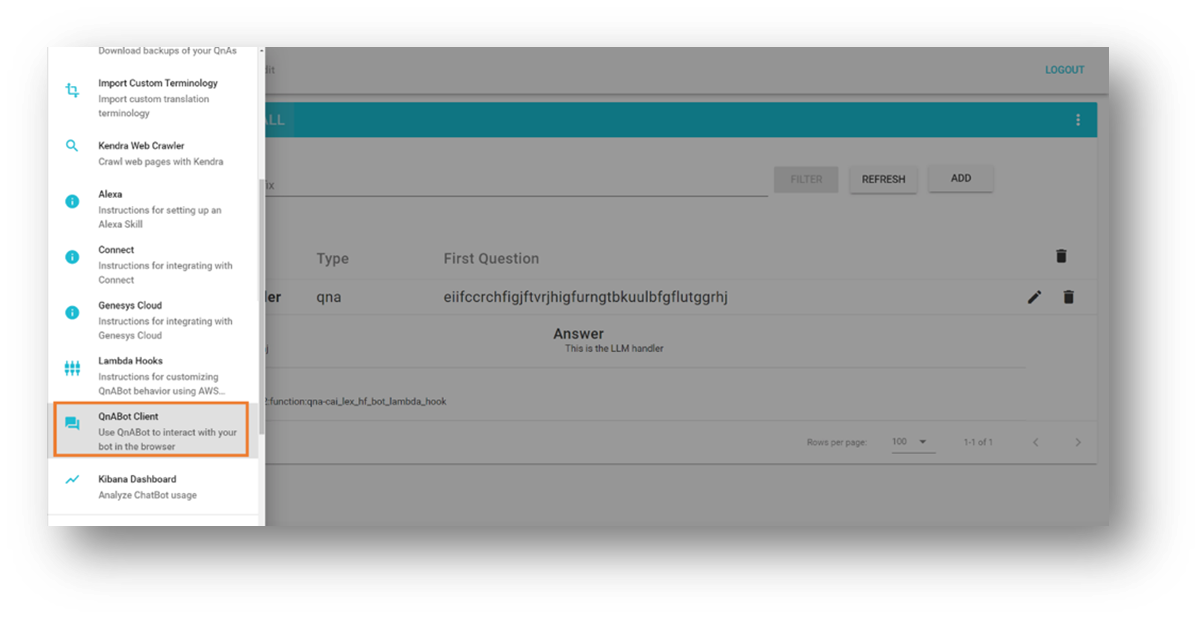

- Open the tools menu in the top left corner.

- Choose QnABot Client.

- Choose Sign In as Admin.

- Enter any question in the user interface.

- Evaluate the response.

Clean up

To avoid incurring future charges, delete the resources created by our solution by following these steps:

- On the AWS CloudFormation console, select the stack named SagemakerFlanLLMStack (or the custom name you set to the stack).

- Choose Delete.

- If you deployed the QnABot instance for your tests, select the QnABot stack.

- Choose Delete.

Conclusion

In this post, we explored the addition of open-domain capabilities to a task-oriented bot that routes the user requests to an open-source large language model.

We encourage you to:

- Save the conversation history to an external persistence mechanism – For example, you can save the conversation history to Amazon DynamoDB or an S3 bucket and retrieve it in the Lambda function hook. In this way, you don’t need to rely on the internal non-persistent session attributes management offered by Amazon Lex.

- Experiment with summarization – In multi-turn conversations, it’s helpful to generate a summary that you can use in your prompts to add context and limit the usage of conversation history. This helps to prune the bot session size and keep the Lambda function memory consumption low.

- Experiment with prompt variations – Modify the original prompt description to match your experimentation purposes.

- Adapt the language model for optimal results – You can do this by fine-tuning the advanced LLM parameters such as randomness (temperature) and determinism (top_p) according to your applications. We demonstrated a sample integration using a pre-trained model with sample values, but have fun adjusting the values for your use cases.
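To build intuition for the last item, the following toy sketch shows what temperature and top_p do to a next-token distribution. It is a simplified illustration in plain Python with made-up logits, not how the endpoint or any specific library implements sampling; the function names are hypothetical.

```python
import math


def apply_temperature(logits, temperature):
    """Softmax over logits divided by temperature; lower values sharpen the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p):
    """Keep the most likely tokens until their cumulative probability reaches top_p,
    then renormalize. Returns a mapping of token index to probability."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
    for t in (0.5, 1.0, 1.5):
        print(t, [round(p, 3) for p in apply_temperature(logits, t)])
    print(top_p_filter(apply_temperature(logits, 1.0), 0.9))
```

Lower temperature and lower top_p both concentrate probability on the most likely tokens, which tends to make responses more repeatable at the cost of variety.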

In our next post, we plan to help you discover how to fine-tune pre-trained LLM-powered chatbots with your own data.

Are you experimenting with LLM chatbots on AWS? Tell us more in the comments!


About the Authors

Marcelo Silva is an experienced tech professional who excels in designing, developing, and implementing cutting-edge products. Starting off his career at Cisco, Marcelo worked on various high-profile projects including deployments of the first ever carrier routing system and the successful rollout of ASR9000. His expertise extends to cloud technology, analytics, and product management, having served as senior manager for several companies like Cisco, Cape Networks, and AWS before joining GenAI. Currently working as a Conversational AI/GenAI Product Manager, Marcelo continues to excel in delivering innovative solutions across industries.


Victor Rojo is a highly experienced technologist who is passionate about the latest in AI, ML, and software development. With his expertise, he played a pivotal role in bringing Amazon Alexa to the US and Mexico markets while spearheading the successful launch of Amazon Textract and AWS Contact Center Intelligence (CCI) to AWS Partners. As the current Principal Tech Leader for the Conversational AI Competency Partners program, Victor is committed to driving innovation and bringing cutting-edge solutions to meet the evolving needs of the industry.


Justin Leto is a Sr. Solutions Architect at Amazon Web Services with a specialization in machine learning. His passion is helping customers harness the power of machine learning and AI to drive business growth. Justin has presented at global AI conferences, including AWS Summits, and lectured at universities. He leads the NYC machine learning and AI meetup. In his spare time, he enjoys offshore sailing and playing jazz. He lives in New York City with his wife and baby daughter.


Ryan Gomes is a Data & ML Engineer with the AWS Professional Services Intelligence Practice. He is passionate about helping customers achieve better outcomes through analytics and machine learning solutions in the cloud. Outside work, he enjoys fitness, cooking, and spending quality time with friends and family.


Mahesh Birardar is a Sr. Solutions Architect at Amazon Web Services with specialization in DevOps and Observability. He enjoys helping customers implement cost-effective architectures that scale. Outside work, he enjoys watching movies and hiking.


Kanjana Chandren is a Solutions Architect at Amazon Web Services (AWS) who is passionate about Machine Learning. She helps customers in designing, implementing and managing their AWS workloads. Outside of work she loves travelling, reading and spending time with family and friends.
