Amazon SageMaker is an end-to-end machine learning (ML) platform with wide-ranging features to ingest, transform, and measure bias in data, and train, deploy, and manage models in production with best-in-class compute and services such as Amazon SageMaker Data Wrangler, Amazon SageMaker Studio, Amazon SageMaker Canvas, Amazon SageMaker Model Registry, Amazon SageMaker Feature Store, Amazon SageMaker Pipelines, Amazon SageMaker Model Monitor, and Amazon SageMaker Clarify. Many organizations choose SageMaker as their ML platform because it provides a common set of tools for developers and data scientists. A number of AWS independent software vendor (ISV) partners have already built integrations for users of their software as a service (SaaS) platforms to utilize SageMaker and its various features, including training, deployment, and the model registry.

In this post, we cover the benefits for SaaS platforms of integrating with SageMaker, the range of possible integrations, and the process for developing these integrations. We also deep dive into the most common architectures and AWS resources that facilitate these integrations. This is intended to accelerate time-to-market for ISV partners and other SaaS providers building similar integrations, and to inspire customers who are users of SaaS platforms to partner with SaaS providers on these integrations.

Benefits of integrating with SageMaker

There are a number of benefits for SaaS providers in integrating their SaaS platforms with SageMaker:

- Users of the SaaS platform can take advantage of a comprehensive ML platform in SageMaker

- Users can build ML models with data that is in or outside of the SaaS platform and exploit these ML models

- It provides users with a seamless experience between the SaaS platform and SageMaker

- Users can utilize foundation models available in Amazon SageMaker JumpStart to build generative AI applications

- Organizations can standardize on SageMaker

- SaaS providers can focus on their core functionality and offer SageMaker for ML model development

- It equips SaaS providers with a basis to build joint solutions and go to market with AWS

SageMaker overview and integration options

SageMaker has tools for every step of the ML lifecycle. SaaS platforms can integrate with SageMaker across the ML lifecycle, from data labeling and preparation to model training, hosting, monitoring, and managing models with various components, as shown in the following figure. Depending on the needs, any and all parts of the ML lifecycle can be run in either the customer AWS account or the SaaS AWS account, and data and models can be shared across accounts using AWS Identity and Access Management (IAM) policies or third-party user-based access tools. This flexibility in the integration makes SageMaker an ideal platform for customers and SaaS providers to standardize on.

Integration process and architectures

In this section, we break the integration process into four main stages and cover the common architectures. Note that there can be other integration points in addition to these, but those are less common.

- Data access – How data that is in the SaaS platform is accessed from SageMaker

- Model training – How the model is trained

- Model deployment and artifacts – Where the model is deployed and what artifacts are produced

- Model inference – How the inference happens in the SaaS platform

The diagrams in the following sections assume SageMaker is running in the customer AWS account. Most of the options explained are also applicable if SageMaker is running in the SaaS AWS account. In some cases, an ISV may deploy their software in the customer AWS account. This is usually in a dedicated customer AWS account, meaning there still needs to be cross-account access to the customer AWS account where SageMaker is running.

There are a few different ways in which authentication across AWS accounts can be achieved when data in the SaaS platform is accessed from SageMaker and when the ML model is invoked from the SaaS platform. The recommended method is to use IAM roles. An alternative is to use AWS access keys consisting of an access key ID and secret access key.
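As a rough illustration of the role-based approach, the following Python sketch covers one direction only: the SaaS platform assuming a role in the customer account. It builds the trust policy a customer could attach to that role and exchanges it for temporary credentials through an AWS STS client passed in by the caller (for example, one created with `boto3.client("sts")`). The account ID, external ID, and session name are placeholders we made up for the example, and the use of an external ID is our assumption rather than something this post prescribes.

```python
def build_trust_policy(saas_account_id: str, external_id: str) -> dict:
    """Trust policy allowing principals in the SaaS account to assume the role.

    The external ID condition is an assumption of this sketch; it is a common
    safeguard for third-party cross-account access.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{saas_account_id}:root"},
                "Action": "sts:AssumeRole",
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


def assume_customer_role(sts_client, role_arn: str, external_id: str) -> dict:
    """Return temporary credentials for the role in the customer account.

    `sts_client` is expected to behave like boto3's STS client.
    """
    response = sts_client.assume_role(
        RoleArn=role_arn,
        RoleSessionName="saas-sagemaker-integration",  # hypothetical name
        ExternalId=external_id,
    )
    creds = response["Credentials"]
    # Keys are named so the dict can be unpacked into boto3.client(...).
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }
```

The returned dictionary can be unpacked into a subsequent client constructor, so no long-lived access keys need to be stored in the SaaS platform.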

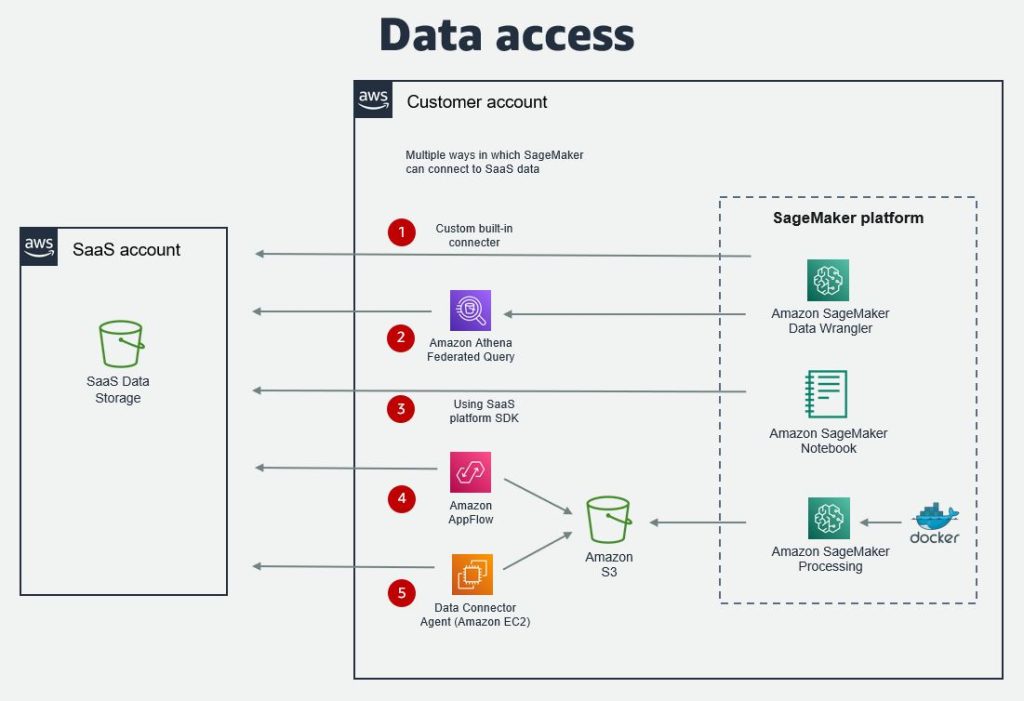

Data access

There are multiple options for how data that is in the SaaS platform can be accessed from SageMaker. Data can be accessed from a SageMaker notebook, from SageMaker Data Wrangler, where users can prepare data for ML, or from SageMaker Canvas. The most common data access options are:

- SageMaker Data Wrangler built-in connector – The SageMaker Data Wrangler connector enables data to be imported from a SaaS platform to be prepared for ML model training. The connector is developed jointly by AWS and the SaaS provider. Current SaaS platform connectors include Databricks and Snowflake.

- Amazon Athena Federated Query for the SaaS platform – Federated queries enable users to query the platform from a SageMaker notebook via Amazon Athena using a custom connector that is developed by the SaaS provider.

- Amazon AppFlow – With Amazon AppFlow, you can use a custom connector to extract data into Amazon Simple Storage Service (Amazon S3), from where it can subsequently be accessed from SageMaker. The connector for a SaaS platform can be developed by AWS or the SaaS provider. The open-source Custom Connector SDK enables the development of a private, shared, or public connector using Python or Java.

- SaaS platform SDK – If the SaaS platform has an SDK (Software Development Kit), such as a Python SDK, this can be used to access data directly from a SageMaker notebook.

- Other options – In addition to these, there can be other options depending on whether the SaaS provider exposes their data via APIs, files, or an agent. The agent can be installed on Amazon Elastic Compute Cloud (Amazon EC2) or AWS Lambda. Alternatively, a service such as AWS Glue or a third-party extract, transform, and load (ETL) tool can be used for data transfer.

The following diagram illustrates the architecture for data access options.

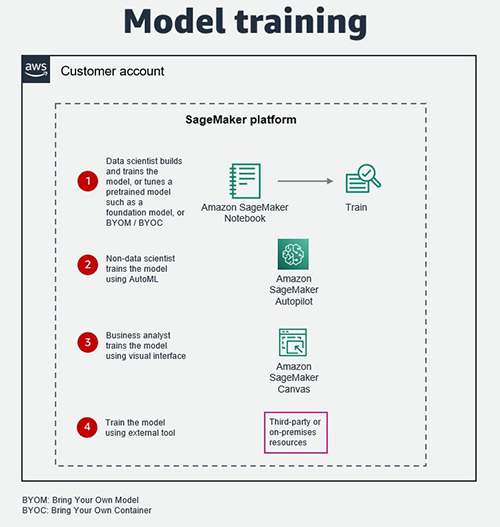
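To make the Athena Federated Query option more concrete, here is a minimal Python sketch of how a notebook might submit a query against a SaaS platform catalog and wait for it to finish. It assumes an Athena client supplied by the caller (for example, `boto3.client("athena")`); the catalog name, database, SQL, and S3 output location are all hypothetical and depend on how the SaaS provider's connector is registered.

```python
import time


def run_federated_query(athena_client, sql, catalog, database, output_s3_uri,
                        poll_seconds=1.0, max_polls=60):
    """Submit a query to Athena and block until it reaches a final state.

    Returns the query execution ID on success so the caller can fetch results
    from Athena or from the S3 output location.
    """
    start = athena_client.start_query_execution(
        QueryString=sql,
        # `catalog` is the name under which the SaaS connector is registered.
        QueryExecutionContext={"Catalog": catalog, "Database": database},
        ResultConfiguration={"OutputLocation": output_s3_uri},
    )
    query_id = start["QueryExecutionId"]

    for _ in range(max_polls):
        execution = athena_client.get_query_execution(QueryExecutionId=query_id)
        state = execution["QueryExecution"]["Status"]["State"]
        if state == "SUCCEEDED":
            return query_id
        if state in ("FAILED", "CANCELLED"):
            raise RuntimeError(f"Athena query {query_id} ended in state {state}")
        time.sleep(poll_seconds)

    raise TimeoutError(f"Athena query {query_id} did not finish in time")
```

Polling is kept deliberately simple here; a production integration would likely add backoff and surface the failure reason reported by Athena.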

Model training

The model can be trained in SageMaker Studio by a data scientist, using Amazon SageMaker Autopilot by a non-data scientist, or in SageMaker Canvas by a business analyst. SageMaker Autopilot takes away the heavy lifting of building ML models, including feature engineering, algorithm selection, and hyperparameter settings, and it is also relatively straightforward to integrate directly into a SaaS platform. SageMaker Canvas provides a no-code visual interface for training ML models.

In addition, data scientists can use pre-trained models available in SageMaker JumpStart, including foundation models from sources such as Alexa, AI21 Labs, Hugging Face, and Stability AI, and tune them for their own generative AI use cases.

Alternatively, the model can be trained in a third-party or partner-provided tool, service, and infrastructure, including on-premises resources, provided the model artifacts are accessible and readable.

The following diagram illustrates these options.

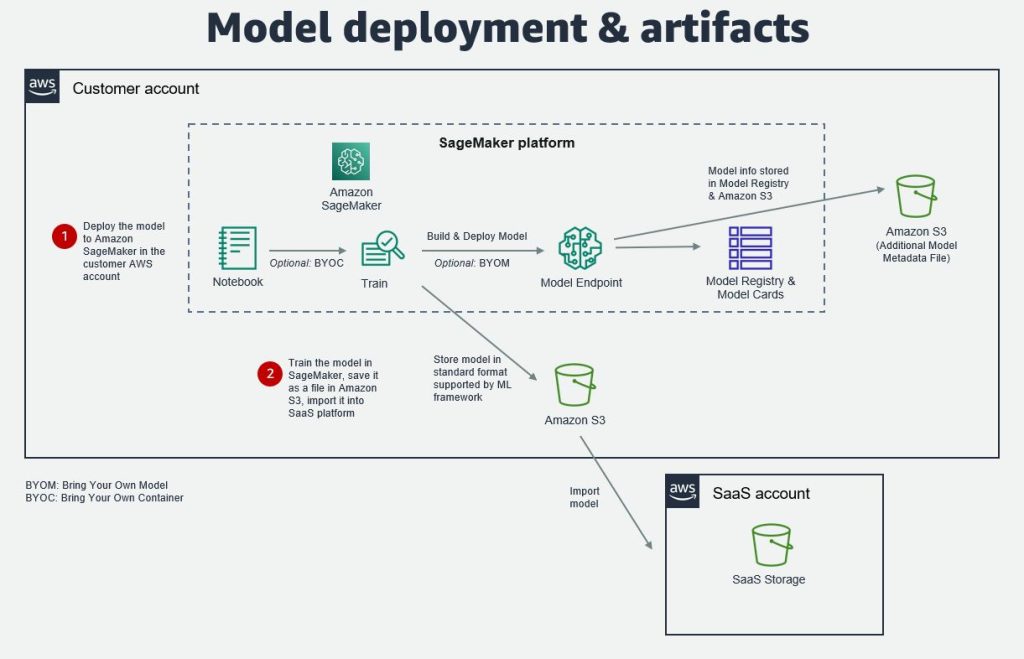

Model deployment and artifacts

After you have trained and tested the model, you can either deploy it to a SageMaker model endpoint in the customer account, or export it from SageMaker and import it into the SaaS platform storage. The model can be stored and imported in standard formats supported by the common ML frameworks, such as pickle, joblib, and ONNX (Open Neural Network Exchange).

If the ML model is deployed to a SageMaker model endpoint, additional model metadata can be stored in the SageMaker Model Registry, SageMaker Model Cards, or in a file in an S3 bucket. This can be the model version, model inputs and outputs, model metrics, model creation date, inference specification, data lineage information, and more. Where there is no property available in the model package, the data can be stored as custom metadata or in an S3 file.

Creating such metadata can help SaaS providers manage the end-to-end lifecycle of the ML model more effectively. This information can be synced to the model log in the SaaS platform and used to track changes and updates to the ML model. Subsequently, this log can be used to determine whether to refresh downstream data and applications that use that ML model in the SaaS platform.

The following diagram illustrates this architecture.

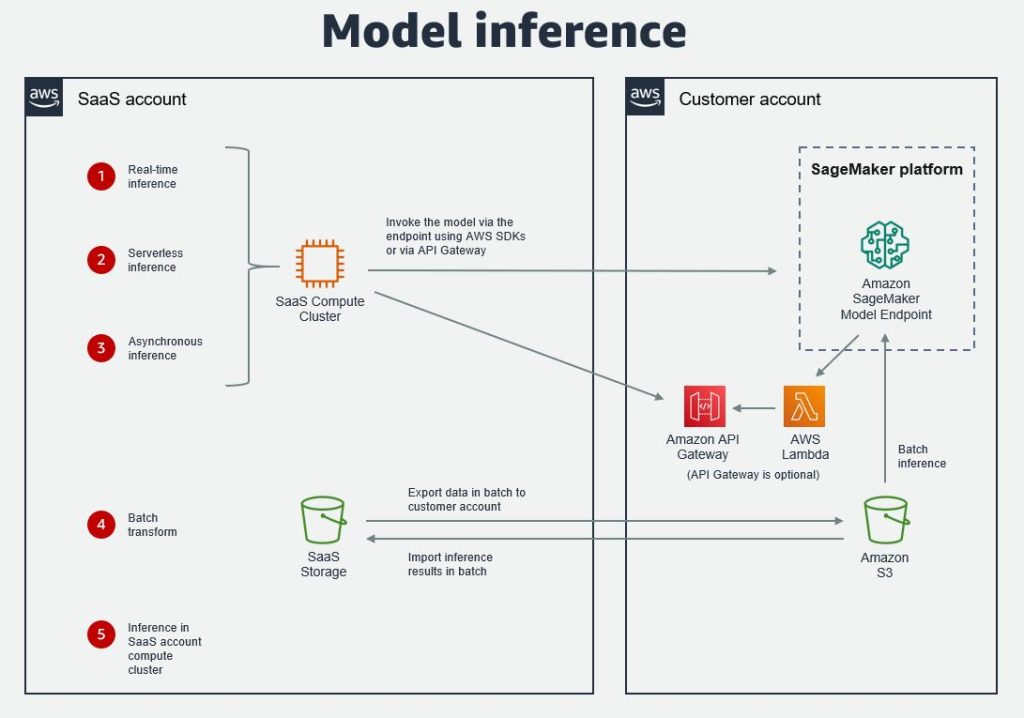
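The metadata sync described above could look roughly like the following Python sketch. It is purely illustrative: the field names, the shape of the SaaS platform's model log (a dictionary keyed by model name), and the rule "refresh when the version increases" are all assumptions of ours, not a format defined by SageMaker or by any particular SaaS platform.

```python
import json


def build_model_metadata(name, version, created_at, inputs, outputs, metrics):
    """Collect the metadata fields discussed above into one record."""
    return {
        "model_name": name,
        "model_version": version,
        "created_at": created_at,  # ISO 8601 timestamp string
        "inputs": list(inputs),
        "outputs": list(outputs),
        "metrics": dict(metrics),
    }


def needs_refresh(model_log, metadata):
    """True if the log has no entry for the model or only an older version."""
    logged = model_log.get(metadata["model_name"])
    return logged is None or logged["model_version"] < metadata["model_version"]


def sync_model_log(model_log, metadata):
    """Update the log in place and report whether downstream data is stale."""
    refresh = needs_refresh(model_log, metadata)
    if refresh:
        model_log[metadata["model_name"]] = metadata
    return refresh


def to_s3_document(metadata):
    """Serialize the record as JSON, for example to store as a file in S3."""
    return json.dumps(metadata, sort_keys=True)
```

A SaaS platform could call `sync_model_log` on a schedule or in response to a registry event, and trigger a refresh of dependent datasets and applications only when it returns `True`.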

Model inference

SageMaker offers four options for ML model inference: real-time inference, serverless inference, asynchronous inference, and batch transform. For the first three, the model is deployed to a SageMaker model endpoint and the SaaS platform invokes the model using the AWS SDKs. The recommended option is to use the Python SDK. The inference pattern for each of these is similar in that the predictor's predict() or predict_async() methods are used. Cross-account access can be achieved using role-based access.
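For illustration, the following Python sketch shows the shape of a real-time invocation from the SaaS platform. Instead of the SageMaker Python SDK predictor mentioned above, it uses the lower-level `invoke_endpoint` call on a SageMaker runtime client passed in by the caller (for example, `boto3.client("sagemaker-runtime", ...)` created with cross-account credentials), which keeps the example small. The endpoint name, the CSV request format, and the JSON response format are assumptions; the real formats depend on the model container.

```python
import json


def invoke_realtime_endpoint(runtime_client, endpoint_name, features):
    """Send one record to a SageMaker endpoint and return the parsed response.

    Assumes the model accepts a single CSV row and answers with JSON.
    """
    payload = ",".join(str(value) for value in features)
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Accept="application/json",
        Body=payload.encode("utf-8"),
    )
    # The response body is a stream; read it fully before decoding.
    return json.loads(response["Body"].read().decode("utf-8"))
```

Because the client is injected, the same function works whether the credentials come from an assumed IAM role or from another mechanism.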

It is also possible to seal off the backend behind Amazon API Gateway, which calls the endpoint via a Lambda function that runs in a protected private network.

For batch transform, data from the SaaS platform first needs to be exported in batch into an S3 bucket in the customer AWS account, then the inference is done on this data in batch. The inference is done by first creating a transformer job or object, and then calling the transform() method with the S3 location of the data. Results are imported back into the SaaS platform in batch as a dataset, and joined to other datasets in the platform as part of a batch pipeline job.
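The batch flow can be sketched in Python as follows. Rather than the SageMaker Python SDK transformer object and its transform() method, this example uses the equivalent lower-level `create_transform_job` request on a SageMaker client passed in by the caller (for example, `boto3.client("sagemaker")`). The job name, model name, S3 locations, CSV format, and instance type are placeholders chosen for the example.

```python
def build_transform_job_request(job_name, model_name, input_s3_uri, output_s3_uri,
                                instance_type="ml.m5.xlarge", instance_count=1):
    """Build the request for a batch transform job over CSV data in S3."""
    return {
        "TransformJobName": job_name,
        "ModelName": model_name,
        "TransformInput": {
            "DataSource": {
                "S3DataSource": {"S3DataType": "S3Prefix", "S3Uri": input_s3_uri}
            },
            "ContentType": "text/csv",
            "SplitType": "Line",  # treat each line as one record
        },
        "TransformOutput": {"S3OutputPath": output_s3_uri},
        "TransformResources": {
            "InstanceType": instance_type,
            "InstanceCount": instance_count,
        },
    }


def start_batch_transform(sagemaker_client, **job_settings):
    """Start the job and return its name so the caller can track it."""
    request = build_transform_job_request(**job_settings)
    sagemaker_client.create_transform_job(**request)
    return request["TransformJobName"]
```

After the job completes, the SaaS platform would read the results from the output S3 location and import them as a dataset in its own batch pipeline.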

Another option for inference is to do it directly in the SaaS account compute cluster. This would be the case when the model has been imported into the SaaS platform. In this case, SaaS providers can choose from a range of EC2 instances that are optimized for ML inference.

The following diagram illustrates these options.

Example integrations

Several ISVs have built integrations between their SaaS platforms and SageMaker. To learn more about some example integrations, refer to the following:

Conclusion

In this post, we explained why and how SaaS providers should integrate SageMaker with their SaaS platforms by breaking the process into four parts and covering the common integration architectures. SaaS providers looking to build an integration with SageMaker can utilize these architectures. If there are any custom requirements beyond what has been covered in this post, including with other SageMaker components, get in touch with your AWS account teams. Once the integration has been built and validated, ISV partners can join the AWS Service Ready Program for SageMaker and unlock a variety of benefits.

We also ask customers who are users of SaaS platforms to register their interest in an integration with Amazon SageMaker with their AWS account teams, as this can help inspire and progress the development for SaaS providers.

About the Authors

Mehmet Bakkaloglu is a Principal Solutions Architect at AWS, focusing on Data Analytics, AI/ML and ISV partners.

Raj Kadiyala is a Principal AI/ML Evangelist at AWS.
