Adaptive computation refers to the ability of a machine learning system to adjust its behavior in response to changes in the environment. Conventional neural networks have a fixed function and computation capacity, i.e., they spend the same number of FLOPs to process different inputs. A model with adaptive and dynamic computation instead modulates the computational budget it dedicates to each input, depending on the complexity of that input.

Adaptive computation in neural networks is appealing for two key reasons. First, the mechanism that introduces adaptivity provides an inductive bias that can play a key role in solving some challenging tasks. For instance, allowing different numbers of computational steps for different inputs can be crucial in solving arithmetic problems that require modeling hierarchies of different depths. Second, it gives practitioners the ability to tune the cost of inference through the greater flexibility offered by dynamic computation, as these models can be adjusted to spend more FLOPs processing a new input.

Neural networks can be made adaptive by using different functions or computation budgets for different inputs. A deep neural network can be thought of as a function that outputs a result based on both the input and its parameters. To implement adaptive function types, a subset of parameters is selectively activated based on the input, a process referred to as conditional computation. Adaptivity based on the function type has been explored in studies on mixture-of-experts, where the sparsely activated parameters for each input sample are determined through routing.
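To make conditional computation concrete, here is a minimal top-1 routing sketch in plain Python, in the spirit of mixture-of-experts layers. It is not AdaTape's mechanism and not any library's API; `top1_moe`, the router weights, and the toy experts are hypothetical illustrations. The point is only that which parameters run depends on the input.

```python
import math


def softmax(logits):
    # Subtract the max for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top1_moe(x, router_weights, experts):
    """Route one token to a single expert (top-1 gating).

    x: token vector; router_weights: one weight row per expert;
    experts: list of callables mapping a vector to a vector.
    Only the selected expert is evaluated, so the parameters that are
    used depend on the input.
    """
    logits = [sum(w * v for w, v in zip(row, x)) for row in router_weights]
    probs = softmax(logits)
    k = max(range(len(probs)), key=probs.__getitem__)
    # Scale the chosen expert's output by its gate probability.
    return k, [probs[k] * y for y in experts[k](x)]


# Usage: two toy experts and a router that favors expert 0 for this token.
experts = [lambda v: [2 * a for a in v], lambda v: [-a for a in v]]
k, out = top1_moe([2.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], experts)
print(k, out)
```

Because only one expert is evaluated per token, the parameter count can grow with the number of experts while the per-token FLOPs stay roughly constant.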

Another area of research in adaptive computation involves dynamic computation budgets. Unlike standard neural networks, such as T5, GPT-3, PaLM, and ViT, whose computation budget is fixed across samples, recent research has demonstrated that adaptive computation budgets can improve performance on tasks where transformers fall short. Many of these works achieve adaptivity by using dynamic depth to allocate the computation budget. For example, the Adaptive Computation Time (ACT) algorithm was proposed to provide an adaptive computational budget for recurrent neural networks. The Universal Transformer extends the ACT algorithm to transformers by making the computation budget depend on the number of transformer layers used for each input example or token. Recent studies, like PonderNet, follow a similar approach while improving the dynamic halting mechanisms.
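The ACT halting rule can be sketched as follows, assuming a recurrent cell has already produced a halting probability h_n at each step n. Computation stops at the first step where the cumulative halting probability reaches 1 - eps, and that final step receives the remainder R so that the step weights sum to 1. `act_halting` and `act_output` are illustrative names, not from any library, and the per-step states are scalars here for brevity.

```python
def act_halting(halting_probs, eps=0.01):
    """ACT-style halting over per-step probabilities h_1, h_2, ...

    Returns (N, weights): N is the first step at which the cumulative
    halting probability reaches 1 - eps (or the last available step),
    and weights are h_1..h_{N-1} followed by the remainder R.
    """
    weights, total = [], 0.0
    for n, h in enumerate(halting_probs, start=1):
        if total + h >= 1.0 - eps or n == len(halting_probs):
            weights.append(1.0 - total)  # remainder R so weights sum to 1
            return n, weights
        weights.append(h)
        total += h
    raise ValueError("need at least one step")


def act_output(step_states, weights):
    # Final output: weighted average of the per-step states.
    return sum(w * s for w, s in zip(weights, step_states))


n, w = act_halting([0.3, 0.5, 0.4])
ponder_cost = n + w[-1]  # ACT's ponder cost N + R
print(n, w, ponder_cost, act_output([1.0, 2.0, 3.0], w))
```

For halting probabilities [0.3, 0.5, 0.4] and eps = 0.01, the rule stops after three steps with weights of roughly [0.3, 0.5, 0.2], so the ponder cost is about 3.2. An input whose first halting probability is already close to 1 would stop after a single step.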

In the paper “Adaptive Computation with Elastic Input Sequence”, we introduce a new model that uses adaptive computation, called AdaTape. AdaTape is a Transformer-based architecture that uses a dynamic set of tokens to create elastic input sequences, offering a different perspective on adaptivity compared to previous works. AdaTape uses an adaptive tape reading mechanism to determine a varying number of tape tokens that are added to each input based on the input’s complexity. AdaTape is very simple to implement and provides an effective knob to increase accuracy when needed, yet it is also much more efficient than other adaptive baselines because it injects adaptivity directly into the input sequence instead of the model depth. Finally, AdaTape offers better performance on standard tasks, like image classification, as well as on algorithmic tasks, while maintaining a favorable quality and cost tradeoff.

Adaptive computation transformer with elastic input sequence

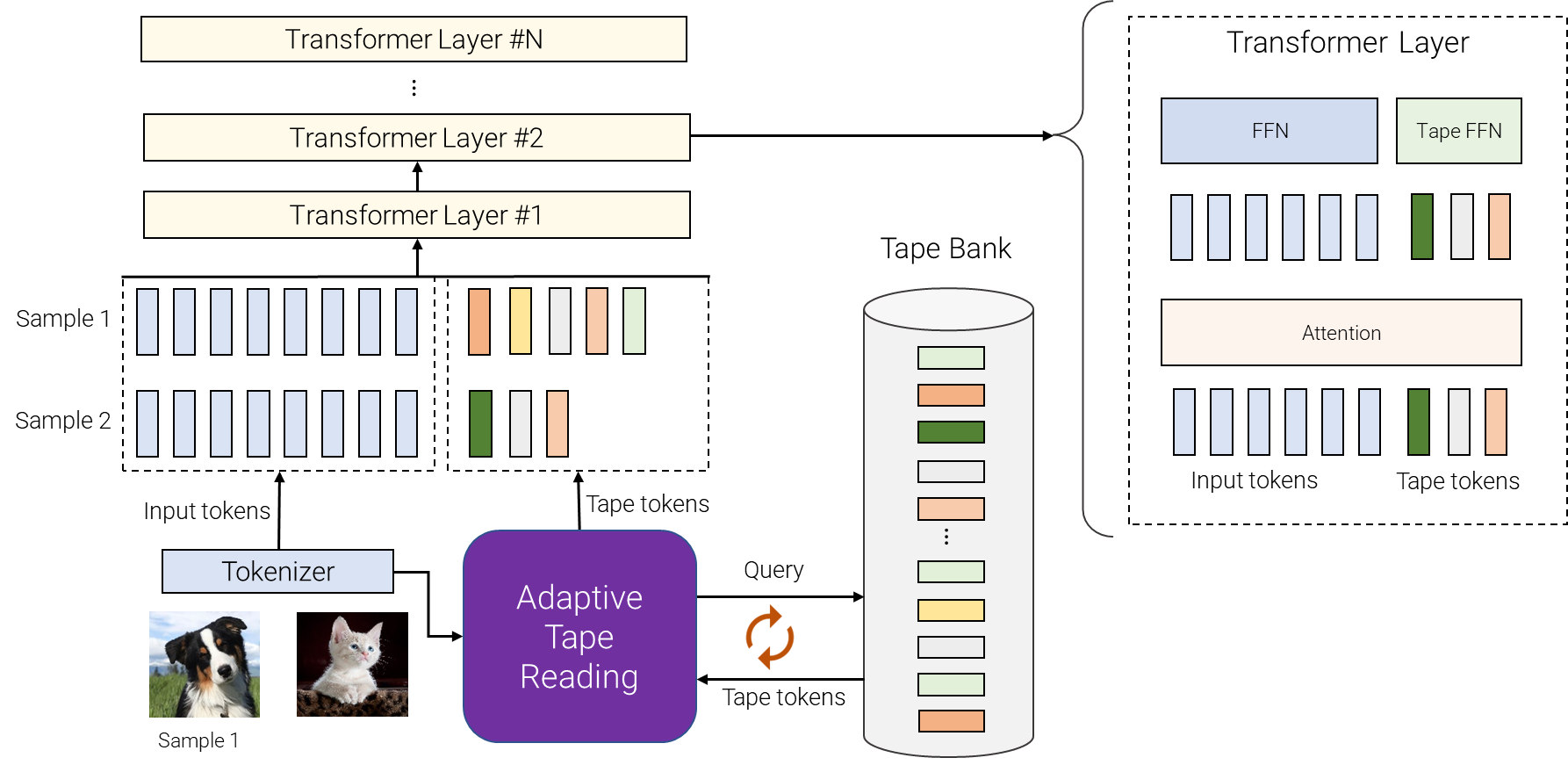

AdaTape uses both adaptive function types and a dynamic computation budget. Specifically, for a batch of input sequences after tokenization (e.g., a linear projection of non-overlapping patches from an image in the vision transformer), AdaTape uses a vector representing each input to dynamically select a variable-sized sequence of tape tokens.

AdaTape uses a bank of tokens, called a “tape bank”, to store all the candidate tape tokens that interact with the model through the adaptive tape reading mechanism. We explore two different methods for creating the tape bank: an input-driven bank and a learnable bank.

The general idea of the input-driven bank is to extract a bank of tokens from the input using a different approach than the original model tokenizer uses to map the raw input to a sequence of input tokens. This enables dynamic, on-demand access to information from the input that is obtained from a different point of view, e.g., a different image resolution or a different level of abstraction.
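As a hedged illustration of an input-driven bank, the sketch below tokenizes the same image twice: once with the main tokenizer's patch size and once with a smaller patch size for the bank. The patch sizes (4 and 2 on an 8x8 single-channel image) and the helper names `extract_patches` and `project` are assumptions chosen for readability, not the configuration used in the paper. In practice each set of patches would get its own linear projection to the model width.

```python
def extract_patches(image, patch):
    """Split an H x W image (list of rows) into non-overlapping
    patch x patch blocks, each flattened in row-major order."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            patches.append([image[r][c]
                            for r in range(top, top + patch)
                            for c in range(left, left + patch)])
    return patches


def project(patches, weight):
    # Linear projection: weight has one row per output dimension.
    return [[sum(wi * xi for wi, xi in zip(row, p)) for row in weight]
            for p in patches]


image = [[float(r * 8 + c) for c in range(8)] for r in range(8)]
input_patches = extract_patches(image, 4)  # main tokenizer: 4 coarse patches
bank_patches = extract_patches(image, 2)   # tape bank: 16 finer patches
print(len(input_patches), len(bank_patches))
```

The bank holds the same pixels at a finer granularity, so reading extra bank tokens gives the model a higher-resolution view of the regions it chooses.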

In some cases, tokenization at a different level of abstraction is not possible, so an input-driven tape bank is not feasible; for example, it can be difficult to further split each node in a graph transformer. To address this issue, AdaTape offers a more general approach for generating the tape bank by using a set of trainable vectors as tape tokens. This approach is called the learnable bank and can be viewed as an embedding layer from which the model dynamically retrieves tokens based on the complexity of the input example. The learnable bank enables AdaTape to generate a more flexible tape bank, giving it the ability to dynamically adjust its computation budget based on the complexity of each input example. For example, more complex examples retrieve more tokens from the bank, which lets the model not only use the knowledge stored in the bank but also spend more FLOPs processing it, since the input is now larger.
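A minimal sketch of adaptive tape reading from a learnable bank follows. It is a simplified, hypothetical variant, not the paper's exact algorithm: the query is assumed to be the mean of the input tokens, each step reads the highest-scoring unread bank token, and reading stops once the accumulated softmax weight of the tokens read reaches 1 - eps, in the style of ACT. Folding each token read back into the query is also an assumption, meant to illustrate a lightweight recurrence inside the selection step. `adaptive_tape_reading` and its parameters are illustrative names.

```python
import math


def _softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def adaptive_tape_reading(input_tokens, bank, eps=0.01, max_tokens=None):
    """Hypothetical simplified adaptive tape reading.

    Reads bank tokens one at a time until the accumulated softmax weight
    of the tokens read reaches 1 - eps, or until max_tokens (default: the
    whole bank) have been read. Returns (selected indices, halting sum).
    """
    d = len(bank[0])
    limit = len(bank) if max_tokens is None else min(max_tokens, len(bank))
    # Assumption: the input is summarized by mean-pooling its tokens.
    query = [sum(col) / len(input_tokens) for col in zip(*input_tokens)]
    selected, halting = [], 0.0
    while len(selected) < limit and halting < 1.0 - eps:
        probs = _softmax([_dot(query, b) / math.sqrt(d) for b in bank])
        # Highest-scoring bank token that has not been read yet.
        k = max((i for i in range(len(bank)) if i not in selected),
                key=probs.__getitem__)
        selected.append(k)
        halting += probs[k]
        # Assumed recurrence: fold the token just read into the query.
        query = [q + t for q, t in zip(query, bank[k])]
    return selected, halting


bank = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
easy, _ = adaptive_tape_reading([[10.0, 0.0]], bank)
hard, _ = adaptive_tape_reading([[1.0, 0.0], [-1.0, 0.0]], bank)
tape = [bank[i] for i in hard]  # appended after the input tokens
print(easy, hard)
```

An input whose query strongly matches one bank token halts after a single read, while an input whose query scores the bank tokens similarly keeps reading, so it ends up with a longer sequence and spends more FLOPs downstream.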

Finally, the selected tape tokens are appended to the original input and fed to the subsequent transformer layers. In each transformer layer, the same multi-head attention is used across all input and tape tokens. However, two different feed-forward networks (FFNs) are used: one for all tokens from the original input and the other for all tape tokens. We observed slightly better quality when using separate feed-forward networks for input and tape tokens.
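The layer structure described above, with attention shared across the whole sequence but separate feed-forward networks for input and tape tokens, can be sketched as below. This is a simplified, hypothetical version: single-head attention with identity query/key/value projections, residual connections, and no layer normalization. `ffn_input` and `ffn_tape` stand in for the two FFNs and are supplied as toy callables.

```python
import math


def self_attention(tokens):
    """Single-head self-attention with identity Q/K/V projections.

    A simplification of multi-head attention: every token, whether from
    the input or the tape, attends to every token in the sequence.
    """
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        z = sum(weights)
        out.append([sum(w * v[i] for w, v in zip(weights, tokens)) / z
                    for i in range(d)])
    return out


def adatape_layer(input_tokens, tape_tokens, ffn_input, ffn_tape):
    seq = input_tokens + tape_tokens
    # Shared attention over the concatenated sequence, plus a residual.
    seq = [[x + a for x, a in zip(t, att)]
           for t, att in zip(seq, self_attention(seq))]
    n = len(input_tokens)
    # Separate FFNs for input and tape tokens, each with a residual.
    return ([[x + f for x, f in zip(t, ffn_input(t))] for t in seq[:n]] +
            [[x + f for x, f in zip(t, ffn_tape(t))] for t in seq[n:]])


# Toy FFNs so the split is visible: the tape FFN adds 1 to every feature.
out = adatape_layer([[1.0, 0.0]], [[0.0, 1.0]],
                    ffn_input=lambda t: [0.0] * len(t),
                    ffn_tape=lambda t: [1.0] * len(t))
print(out)
```

Only the FFN parameters are duplicated; the attention weights are shared, so input and tape tokens can still exchange information in every layer.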

AdaTape provides a helpful inductive bias

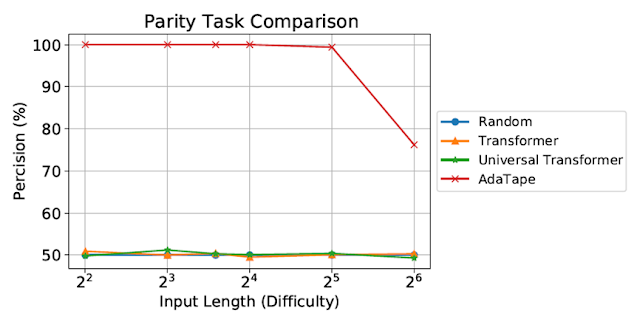

We evaluate AdaTape on parity, a very challenging task for the standard Transformer, to study the effect of AdaTape's inductive biases. In the parity task, given a sequence of 1s, 0s, and -1s, the model has to predict whether the number of 1s in the sequence is even or odd. Parity is the simplest non-counter-free, or periodic, regular language, but perhaps surprisingly, the task is unsolvable by the standard Transformer.

Evaluation on the parity task. The standard Transformer and Universal Transformer were unable to perform this task, both showing performance at the level of a random guessing baseline.

Despite being evaluated on short, simple sequences, both the standard Transformer and the Universal Transformer were unable to perform the parity task because they cannot maintain a counter within the model. AdaTape, however, outperforms all baselines, as it incorporates a lightweight recurrence within its input selection mechanism. This recurrence provides an inductive bias that enables the implicit maintenance of a counter, which is not possible in standard Transformers.
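The parity task itself is easy to generate, and a single running state that flips on every 1 solves it exactly; that sequential state is the kind of counter the paragraph above refers to. The sketch below shows both. The function names and the convention of labeling odd counts as 1 are assumptions for illustration, not the paper's data pipeline.

```python
import random


def parity_label(seq):
    # 1 if the sequence contains an odd number of 1s, else 0.
    return seq.count(1) % 2


def make_parity_example(length, rng):
    """Sample a sequence over {1, 0, -1} together with its parity label."""
    seq = [rng.choice((1, 0, -1)) for _ in range(length)]
    return seq, parity_label(seq)


def parity_with_counter(seq):
    """Solve parity with one bit of state flipped by each 1, scanning
    left to right. This sequential state acts as the counter."""
    state = 0
    for token in seq:
        if token == 1:
            state ^= 1
    return state


rng = random.Random(0)
seq, label = make_parity_example(12, rng)
print(seq, label, parity_with_counter(seq) == label)
```

The label depends on every 1 in the sequence, so flipping any single 1 to a 0 or -1 flips the answer; a step-by-step recurrence handles this trivially, while a fixed stack of attention layers has no built-in state to carry it.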

Evaluation on image classification

We also evaluate AdaTape on the image classification task. To do so, we trained AdaTape on ImageNet-1K from scratch. The figure below shows the accuracy of AdaTape and the baseline methods, including A-ViT and the Universal Transformer ViT (UViT and U2T), versus their speed (measured as the number of images processed per core per second). In terms of quality and cost tradeoff, AdaTape performs much better than the alternative adaptive transformer baselines. In terms of efficiency, larger AdaTape models (in terms of parameter count) are faster than smaller baselines. These results are consistent with the finding from previous work that adaptive model depth architectures are not well suited to many accelerators, like the TPU.

We evaluate AdaTape by training on ImageNet from scratch. For A-ViT, we not only report the results from their paper but also re-implement A-ViT by training from scratch, i.e., A-ViT(Ours).

A study of AdaTape’s behavior

In addition to its performance on the parity task and ImageNet-1K, we also evaluated the token selection behavior of AdaTape with an input-driven bank on the JFT-300M validation set. To better understand the model’s behavior, we visualized the token selection results on the input-driven bank as heatmaps, where lighter colors mean that a position is selected more frequently. The heatmaps reveal that AdaTape more frequently picks the central patches. This aligns with our prior knowledge, as central patches are typically more informative, especially in datasets of natural images, where the main object is usually in the middle of the image. This result suggests that AdaTape can effectively identify and prioritize the more informative patches to improve its performance.

We visualize the tape token selection heatmaps of AdaTape-B/32 (left) and AdaTape-B/16 (right). A hotter (lighter) color means the patch at that position is selected more frequently.
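A heatmap like the ones described above can be built by counting, over many examples, how often each patch position is selected and normalizing by the most frequently selected position. The sketch assumes selections are recorded as row-major patch indices on a fixed grid; `selection_heatmap` is a hypothetical helper, not code from the paper.

```python
def selection_heatmap(selections, grid_h, grid_w):
    """Selection frequency per patch position, scaled to [0, 1].

    selections: one list of selected patch indices per example, with
    indices in row-major order over a grid_h x grid_w patch grid.
    """
    counts = [[0] * grid_w for _ in range(grid_h)]
    for indices in selections:
        for idx in indices:
            r, c = divmod(idx, grid_w)
            counts[r][c] += 1
    peak = max(max(row) for row in counts) or 1  # avoid dividing by zero
    return [[v / peak for v in row] for row in counts]


# Three examples on a 3x3 grid; the center patch (index 4) is picked every time.
heat = selection_heatmap([[4], [4, 1], [4]], 3, 3)
for row in heat:
    print(row)
```

Normalizing by the peak count makes heatmaps from models with different average tape lengths directly comparable in shape, at the cost of hiding absolute selection rates.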

Conclusion

AdaTape is characterized by elastic sequence lengths generated by the adaptive tape reading mechanism. This mechanism also introduces a new inductive bias that gives AdaTape the potential to solve tasks that are challenging for both standard transformers and existing adaptive transformers. Through comprehensive experiments on image recognition benchmarks, we demonstrate that AdaTape outperforms standard transformers and adaptive-architecture transformers when computation is held constant.

Acknowledgments

One of the authors of this post, Mostafa Dehghani, is now at Google DeepMind.